Matching a transformer to its anticipated load is a critical aspect of reducing energy consumption.

In 2002, NEMA issued Standard TP-1 in support of the U.S. Dept. of Energy’s guidelines for more energy-efficient electrical devices. This standard was based on a previous U.S. Environmental Protection Agency study showing that the typical dry-type transformer under normal operating conditions was loaded to approximately 35% of its nameplate rating. TP-1 therefore established a table of minimum efficiencies for transformers of various sizes when serving linear loads at that loading (see Table 1). These efficiencies are impressive, ranging from 97% to 98.8%. What TP-1 does not tell you is that you are very unlikely to see such efficiencies in actual installations. Nor does it tell you that using these very efficient transformers will significantly affect your electrical designs.

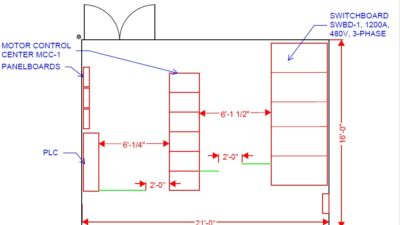

Because of the differences between the efficiencies shown in TP-1 and what really happens with real transformers in real applications, the approach you take could be significantly different when you are trying to design an electrical system with minimized losses. This article offers suggestions on how to approach your electrical designs to keep losses in the system transformers to a minimum (see Figure 1). It also shows where you will have greater losses than those shown in TP-1, no matter which design direction you choose.

Linearity

TP-1 was developed using linear loads. However, in today’s business environment, most loads are nonlinear (rich in harmonic content). Computers, fluorescent light fixtures, printers, elevators, and variable frequency drives for motors all generate harmonics. Applying harmonically rich loads to transformers can double or triple their total losses. For example, a 75 kVA transformer that would normally have 2% losses at 35% loading would actually have 4% to 6% losses. At the upper end of that range, the 26 kVA load (35% of 75 kVA) would have losses totaling more than 1.5 kW.
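The arithmetic behind that example can be sketched in a few lines of Python. This is only an illustration: the function name `losses_w` is hypothetical, the loss percentages are taken relative to the applied load as in the paragraph above, and kVA is treated as kW (unity power factor), which the article does not state.

```python
def losses_w(rating_kva, loading, loss_fraction):
    """Losses in watts, with loss_fraction taken relative to the applied load.

    Assumption: kVA is treated as kW (unity power factor) for simplicity.
    """
    load_kva = rating_kva * loading
    return load_kva * 1000 * loss_fraction


rating_kva = 75
loading = 0.35  # the TP-1 reference loading

linear_w = losses_w(rating_kva, loading, 0.02)         # 2% losses, linear load
harmonic_low_w = losses_w(rating_kva, loading, 0.04)   # losses doubled by harmonics
harmonic_high_w = losses_w(rating_kva, loading, 0.06)  # losses tripled by harmonics

print(f"Applied load: {rating_kva * loading:.2f} kVA")
print(f"Linear load losses: {linear_w:.0f} W")
print(f"Nonlinear load losses: {harmonic_low_w:.0f} W to {harmonic_high_w:.0f} W")
```

With these inputs the nonlinear case runs from about 1,050 W to about 1,575 W, so the 1.5 kW figure corresponds to the tripled-loss end of the range.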

Core and coil losses

Transformer losses are a combination of core losses and coil losses. The core losses are those generated by energizing the laminated steel core. They are virtually constant from no-load to full-load, and for the typical 150 C rise transformer are about 0.5% of the transformer’s full-load rating. The coil losses are also called load losses because they vary with the load on the transformer, rising roughly with the square of the load current. They account for the balance of the total losses beyond the 0.5% core losses and range from 1.5% to 2% of the total load.
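A minimal sketch of this two-part loss model follows. It assumes the usual I²R behavior, in which coil losses scale with the square of the per-unit load. The function name and the default percentages (0.5% core, 2% coil at full load, both relative to nameplate) are illustrative choices drawn from the rules of thumb above, not manufacturer data.

```python
def total_loss_w(rating_kva, loading, core_pct=0.5, coil_pct_full_load=2.0):
    """Estimate total losses in watts at a given per-unit loading.

    Assumptions (illustrative only):
      - core loss is constant at core_pct of nameplate, regardless of load
      - coil loss equals coil_pct_full_load of nameplate at 100% loading
        and scales with the square of the per-unit loading
    """
    rating_w = rating_kva * 1000
    core_w = rating_w * core_pct / 100
    coil_w = rating_w * coil_pct_full_load / 100 * loading ** 2
    return core_w + coil_w


for loading in (0.0, 0.35, 0.75, 1.0):
    print(f"{loading:>4.0%} loading: {total_loss_w(75, loading):7.1f} W")
```

Under these assumptions the core loss dominates at light load and the coil loss dominates near full load, which is why the operating point matters so much when comparing transformer designs.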

Typically, the total losses for a 75 kVA transformer are about 1,000 W at 35% loading, or 1.3% of the nameplate rating. When the transformer is fully loaded, the actual losses can be more than 3,000 W for linear loads and 7,000 W for nonlinear loads. These amount to 4% and 9.3%, respectively, considerably more than the losses implied by the NEMA TP-1 minimum efficiency for a 75 kVA transformer. While the overall concept of requiring more energy-efficient transformers is quite good, engineers should be very careful about transformer selection when the anticipated operating conditions do not match the base criteria used in developing the TP-1 table.
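For reference, each of these percentages is the loss in watts divided by the 75 kVA nameplate rating (treating kVA as kW, an assumption made here for simplicity):

$$
\frac{1{,}000\ \text{W}}{75{,}000\ \text{VA}} \approx 1.3\%, \qquad
\frac{3{,}000\ \text{W}}{75{,}000\ \text{VA}} = 4\%, \qquad
\frac{7{,}000\ \text{W}}{75{,}000\ \text{VA}} \approx 9.3\%
$$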

Selecting transformers with lower temperature ratings, that is, 115 C or 80 C rise instead of the standard 150 C rise, changes both the core and load losses. To reduce the temperature rise, the core is increased in size. This increases the core losses but reduces the load losses, so, depending on the anticipated operating point, the total losses may be higher or lower than those of the standard transformer. Because of its smaller core losses, the 150 C transformer has lower total losses than the 80 C transformer up to about 60% loading. Above 60% loading, the 80 C transformer has lower total losses than a 150 C transformer of the same size (see Figure 2).
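The crossover point can be estimated once core and full-load coil losses are known for both designs. The sketch below assumes total losses equal a constant core loss plus a coil loss that scales with the square of the per-unit loading. The wattages are hypothetical values chosen only so that the crossover lands near 60%; they are not manufacturer data.

```python
import math


def crossover_loading(core_a_w, coil_a_w, core_b_w, coil_b_w):
    """Per-unit loading at which designs A and B have equal total losses.

    Model (an assumption): total = core + coil_full_load * loading**2.
    Returns None when one design is better at every loading.
    """
    delta_core = core_b_w - core_a_w   # extra core loss of design B
    delta_coil = coil_a_w - coil_b_w   # coil loss saved by design B at full load
    if delta_core * delta_coil <= 0:
        return None
    return math.sqrt(delta_core / delta_coil)


# Hypothetical 75 kVA units (illustrative numbers only).
core_150c_w, coil_150c_w = 375.0, 2000.0   # 150 C rise: small core, higher coil loss
core_80c_w, coil_80c_w = 735.0, 1000.0     # 80 C rise: larger core, lower coil loss

x = crossover_loading(core_150c_w, coil_150c_w, core_80c_w, coil_80c_w)
print(f"Designs break even at about {x:.0%} loading")
```

Below the break-even loading the design with the smaller core wins; above it, the design with the lower coil losses wins. With real loss data from the manufacturer, the same calculation shows which side of the crossover a given design load falls on.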

A good compromise between core and load losses is the 115 C rise transformer. Its core losses are somewhat higher than those of the 150 C transformer but lower than those of the 80 C transformer. Correspondingly, its load losses are lower than those of the 150 C transformer, allowing its total losses to be less than those of the 150 C transformer under normal operating conditions (see “Know the loss data, loading when specifying transformers”).

Distribution transformers and TP-1

Transformers that have primary voltages of 34.5 kV or less and secondary voltages of less than 600 V also must meet the efficiency ratings of TP-1 at a linear loading of 35%. However, TP-1 covers only 3-phase distribution transformers between 15 kVA and 1,000 kVA, so larger transformers are not addressed by this standard. In addition, distribution transformers are traditionally designed to be loaded to between 50% and 75%. As noted previously for the smaller dry-type transformers, units loaded beyond the 35% TP-1 point will have significantly greater losses than the tabulated values. So while the goal of TP-1 was lofty, it does not translate well to actual installations.

Historically, it was common to see distribution transformers with impedance ratings of 5.75%. As the electrical utilities have worked to reduce their operating costs, the impedance of distribution transformers has dropped to values as low as 1.5%. Since the utilities typically absorb the transformer losses as part of their operating costs, reducing the impedance from 5.75% to 1.5% has saved more than 70% of their losses at the transformer. This turned out to be very convenient, because TP-1 was requiring higher efficiencies from these transformers at the same time that the utilities were attempting to reduce their operating costs.
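The 70% figure is consistent with a simple ratio, if one assumes that the losses in question scale in direct proportion to the percent impedance (a simplification, since impedance includes reactance as well as resistance):

$$
1 - \frac{1.5\%}{5.75\%} \approx 0.74
$$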

This process had a negative side effect that was not immediately evident but has a major impact on the electrical engineer’s design: the available fault duty on the secondary of the transformer. A 1,000 kVA transformer with 5.75% impedance will have an available fault duty of 21,000 A at 480 V, assuming an infinite bus on the primary side. Under the same assumptions, a 1.5% impedance transformer would have an available fault duty of 80,000 A. The same transformers operating with a 120/208 V secondary will have available fault duties of 48,000 A and 185,000 A, respectively. This operating efficiency improvement has a major impact on the electrical system design, particularly at the lower secondary voltage of 120/208 V (see “Sizing stepdown transformers”).

While TP-1 did not address transformers rated larger than 1,000 kVA, there have been similar reductions in their impedances to effect matching savings for these larger transformers. As one would anticipate, available fault currents for a 2,500 kVA transformer are dramatically, though proportionally, larger. At 480 V, the fault duty would increase from 52,000 A at 5.75% impedance to more than 200,000 A for a 1.5% impedance transformer. Thank goodness transformers of this size do not commonly come with a 208-V secondary, because the fault current would approach 500,000 A.
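The fault duties quoted for the 1,000 kVA and 2,500 kVA transformers follow from the standard infinite-bus approximation: three-phase full-load current divided by the per-unit impedance. The sketch below reproduces them. The function name is hypothetical, and the calculation ignores source impedance, conductor impedance, and motor contribution.

```python
import math


def fault_current_a(kva, volts_ll, impedance_pct):
    """Symmetrical three-phase fault current at the transformer secondary.

    Assumes an infinite bus on the primary, so the transformer impedance
    is the only thing limiting the current.
    """
    full_load_a = kva * 1000 / (math.sqrt(3) * volts_ll)
    return full_load_a / (impedance_pct / 100)


for kva, volts in [(1000, 480), (1000, 208), (2500, 480), (2500, 208)]:
    for z_pct in (5.75, 1.5):
        amps = fault_current_a(kva, volts, z_pct)
        print(f"{kva:>5} kVA, {volts} V, {z_pct:>4}% Z: {amps:>9,.0f} A")
```

Because the result is inversely proportional to impedance, dropping from 5.75% to 1.5% multiplies the available fault current by nearly four at any size and voltage.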

Application

In the engineer’s quest to reduce energy consumption, matching the transformer to its anticipated load is critical. When a 150-C rise transformer is applied to a lightly loaded linear circuit, the actual losses will be very close to those noted in TP-1. Heavier transformer loadings, however, suggest that the engineer design around one of the lower temperature-rise transformers, such as the 115 C or 80 C units. When significant harmonically rich loads are to be fed by a dry-type transformer, the lowest losses are likely to be achieved by using K-rated transformers that are sized for the anticipated harmonic currents.

Injudicious transformer selection can produce losses that exceed those shown in TP-1 by 300% to 400%, resulting in a negative return on investment for the increased cost of the higher-efficiency transformers.

Know the loss data, loading when specifying transformers

In researching this article, the author found it quite interesting that published loss data from all of the major manufacturers queried was virtually nonexistent. For operating points other than the 35% loading used in TP-1, there appeared to be nothing available. Loss data for 80-C rise, 115-C rise, and K-rated transformers was also unavailable. Asking your local transformer sales representative for loss data at the operating point to which you are designing, and for the type of transformer that you are designing around, may save your client many dollars in energy costs. By contrast, inserting the standard 150-C rise transformer into your design while planning to operate it at a point other than 35% loading, and with a significant percentage of nonlinear loads, could cost your client significantly over the life of the transformer.

Sizing stepdown transformers

One cautionary note on stepdown transformers: When transforming from 480 V to 120/208 V, these low-loss, dry-type transformers can sneak up on your design. With the higher impedances of yore, an engineer usually did not have to worry about higher interrupting duty branch circuit breakers when they were connected downstream from a dry-type transformer and the rest of the distribution system was fully rated. With the lower impedances, transformers as small as 112.5 kVA can have available fault duties that require breakers with an interrupting duty of more than 10,000 A. With a larger dry-type transformer, such as a 300 kVA unit stepping 480 V down to 120/208 V, available fault duties can exceed 40,000 A, requiring your electrical design to use 65,000 AIC breakers. It is better to break the 120/208 V load into smaller divisions so that the maximum transformer size does not exceed 75 kVA with an impedance of at least 2%, and the lower interrupting duty (read: less expensive) breakers may be used.
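A quick check of a stepdown transformer against a breaker interrupting rating might look like the sketch below. It uses the infinite-bus approximation at a 208 V secondary. The 2% impedance applied to every size is an illustrative assumption, not nameplate data, and the function names are hypothetical.

```python
import math


def full_load_amps(kva, volts_ll=208):
    """Three-phase full-load current at the secondary."""
    return kva * 1000 / (math.sqrt(3) * volts_ll)


def fault_current_a(kva, impedance_pct, volts_ll=208):
    """Available fault current, assuming an infinite bus on the primary."""
    return full_load_amps(kva, volts_ll) / (impedance_pct / 100)


def min_impedance_pct(kva, interrupting_a, volts_ll=208):
    """Smallest transformer impedance that keeps the infinite-bus fault
    current at or below the breaker interrupting rating."""
    return 100 * full_load_amps(kva, volts_ll) / interrupting_a


ASSUMED_Z_PCT = 2.0  # illustrative low-impedance value, not nameplate data

for kva in (75, 112.5, 300):
    isc = fault_current_a(kva, ASSUMED_Z_PCT)
    z_min = min_impedance_pct(kva, 10_000)
    print(f"{kva:>6} kVA: {isc:>7,.0f} A at {ASSUMED_Z_PCT}% Z; "
          f"needs at least {z_min:.2f}% Z for 10,000 AIC")
```

Under this simplification, a 75 kVA unit at exactly 2% impedance comes out near 10,400 A, slightly above 10,000 A. Source and conductor impedance, which the sketch ignores, reduce the real value, so a check against the actual installation is still needed before settling on 10,000 AIC breakers.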

Lovorn is president of Lovorn Engineering Assocs. He is a member of the Consulting-Specifying Engineer editorial advisory board.