There are many ways to cool a data center. Engineers should explore the various cooling options and apply the solution that’s appropriate for the application.

Learning objectives:

- Apply the various ways to cool a data center.

- Decide which data center cooling options work best in each part of the world.

- Model different ways to design a data center by making use of various cooling technologies.

The Slinky and IT systems

Can there really be common attributes between a spiral metal toy invented in the 1940s and a revolutionary technology that impacts most people on this planet? This author thinks so. If you have ever played with a Slinky or seen one in action (especially on stairs), you’ll see that as it travels on the stairs, the mass of the spring moves from the trailing end of the Slinky to the leading end. This shifting of the mass causes the trailing end to eventually overtake the leading end, jumping over it and landing on the next stair. If the staircase went on forever, so would the Slinky.

The meaning behind this visual metaphor is that as soon as things seem to settle out in the information technology (IT) sector, some other disruptive force changes the rules and leapfrogs right over the status quo into completely new territory. This is also the vision this author has in his head when thinking about cooling systems for data centers, which have very tight relationships to the IT systems. By the time the HVAC equipment manufacturers have it figured out, the IT industry throws them a curveball and creates a new platform that challenges the status quo in maintaining the appropriate temperature and moisture levels inside the data center. But this is not new—the evolution of powerful computers has continually pushed the limits of power-delivery and cooling systems required to maintain their reliability goals.

A brief history of (computer) time

Early in the development of large, mainframe computers used in the defense and business sectors, one of the major problems that the scientists and engineers faced (and still do today) was the heat generated by the innards of the computer. Some resorted to removing windows, leaving exterior doors open, and other rudimentary approaches that used ambient air for keeping things cool. But others had already realized that water could be a more practical and operationally viable solution to keep the computers at an acceptable temperature. Different generations of computers demonstrate this point:

- UNIVAC, released in 1951, demanded 120 kW and required 52 tons of chilled water cooling. It had an electrical density of 100 W/sq ft.

- Control Data Corp.’s CDC 7600 debuted in the early 1970s and consumed 150 kW. (The primary inventor of the CDC 7600 went on to found Cray Computers.) It was benchmarked at 10 megaflops and used an internal refrigerant cooling system that rejected heat to an external water loop. (FLOPS, or flops, is an acronym for floating-point operations per second.) It had an electrical density of 200 W/sq ft.

- In 1990, IBM introduced the ES/9000 mainframe computer, which consumed 166 kW. Notably, 80% of the machine’s heat was dissipated using chilled-water cooling; the remaining heat was rejected to the air. This system had an electrical density of 210 W/sq ft.

- The first part of the 21st century ushered in some pretty amazing advances in computing. In 2014, Hewlett-Packard announced a new high-performance computer, also water-cooled, that is capable of 1.2 quadrillion calculations/sec peak performance ("petascale" computing capability, which is defined as 10^15 flops). Computing power of this scale, designed for energy and space efficiency, yields a power density of more than 1,000 W/sq ft.
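As a rough cross-check on the figures in this list, the sketch below converts tons of cooling to kilowatts and backs out the floor area implied by each machine's power draw and electrical density. The function names are illustrative only. The one outside fact used is the standard definition of 1 ton of refrigeration as 12,000 Btu/h, or about 3.517 kW.

```python
KW_PER_TON = 3.517  # 1 ton of refrigeration = 12,000 Btu/h, about 3.517 kW


def tons_to_kw(tons):
    """Convert cooling capacity in tons of refrigeration to kW."""
    return tons * KW_PER_TON


def implied_floor_area_sqft(power_kw, density_w_per_sqft):
    """Floor area implied by a total power draw and an electrical density."""
    return power_kw * 1000.0 / density_w_per_sqft


# (power in kW, density in W/sq ft) as quoted in the list above
machines = {
    "UNIVAC": (120, 100),
    "CDC 7600": (150, 200),
    "IBM ES/9000": (166, 210),
}

if __name__ == "__main__":
    print(f"UNIVAC cooling: {tons_to_kw(52):.0f} kW for a 120 kW machine")
    for name, (kw, density) in machines.items():
        area = implied_floor_area_sqft(kw, density)
        print(f"{name}: about {area:.0f} sq ft")
```

On these numbers, UNIVAC's 52 tons works out to roughly 183 kW of cooling, about 1.5 times its electrical demand.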

Moving toward today’s technology

One can glean from this information that it wasn’t until the late 20th/early 21st century that computing technology really took off. New processor, memory, storage, and interconnection technologies resulted in more powerful computers that use less energy on a per-instruction basis. But one thing remained constant: All of this computationally intensive technology, enclosed in ever-smaller packages, produced heat—a lot of heat.

As the computer designers and engineers honed their craft and continued to develop unbelievably powerful computers, the thermal engineering teams responsible for keeping the processors, memory modules, graphics cards, and other internal computer components at an optimal temperature had to develop innovative and reliable cooling solutions to keep pace with this immense computing power. For example, modern-day computational science may require a computer rack that houses close to 3,000 cores, which is roughly the equivalent of 375 servers, in one rack. This equates to an electrical demand (and corresponding cooling load) of 90 kW per rack. This will yield a data center with an electrical density of considerably more than 1,000 W/sq ft, depending on the data center layout and the amount of other equipment in the room. With numbers like this, it is clear: Conventional air cooling will not work in this type of environment.
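The rack arithmetic in this example can be sketched as follows. The helper names are hypothetical; the inputs (3,000 cores, 375 servers, 90 kW per rack, 1,000 W/sq ft) come from the paragraph above.

```python
def cores_per_server(cores, servers):
    """Average number of cores per equivalent server."""
    return cores / servers


def watts_per_server(rack_kw, servers):
    """Average electrical demand per equivalent server, in watts."""
    return rack_kw * 1000.0 / servers


def max_area_per_rack_sqft(rack_kw, density_w_per_sqft):
    """Largest floor area per rack at which the room still reaches
    the given electrical density."""
    return rack_kw * 1000.0 / density_w_per_sqft


if __name__ == "__main__":
    print(cores_per_server(3000, 375))           # cores per server
    print(watts_per_server(90, 375))             # W per server
    print(max_area_per_rack_sqft(90, 1000))      # sq ft per rack
```

In other words, a room full of 90 kW racks exceeds 1,000 W/sq ft whenever each rack is allotted less than 90 sq ft of floor, aisles and support equipment included.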

Current state of data center cooling

Data center cooling systems that employ the most current and common industry methodologies range from split-system, refrigerant-based components to more complex (and sometimes exotic) arrangements, such as liquid immersion, where modified servers are submerged in a mineral oil-like solution. Immersion eliminates all heat transfer to the ambient air because the circulating oil solution becomes the conduit for heat rejection. Other complex systems, such as pumped or thermosiphon carbon-dioxide cooling, also offer very high efficiencies in terms of the volume of heat-rejection media needed; 1 kg of carbon dioxide absorbs the same amount of heat as 7 kg of water. This can potentially reduce piping and equipment sizes as well as energy costs.

Water-based cooling in data centers falls somewhere between the basic (although tried-and-true) air-cooled direct expansion (DX) systems and complex methods with high degrees of sophistication. And because water-based data center cooling systems have been in use in some form or another for more than 60 yr, there is a lot of analytical and historical data on how these systems perform and where their strengths and weaknesses lie. The most common water-based approaches today can be aggregated anecdotally into three primary classifications: near-coupled, close-coupled, and direct-cooled.

Near-coupled: Near-coupled systems include solutions such as rear-door heat exchangers (RDHX), where the cooling water is pumped to a large coil built into the rear door of the IT cabinet (see Figure 1). Depending on the design, airflow-assist fans can also be integrated into the RDHX. This heat-removal design reduces the temperature of the exhaust air coming from the IT equipment (typically cabinet-mounted servers). The temperature reduction varies with parameters such as water temperature, water flow, and airflow, but the goal is to bring the exhaust air as close to ambient temperature as possible. For example, with an inlet air temperature of 75 F and a water flow of 1.3 gpm/ton, 66 F chilled water will neutralize 85% of the heat in the server cabinet, returning that share of the exhaust air to room temperature. With 59 F chilled water, 100% of the heat will be neutralized. These figures assume that the cooling water for the RDHX is a secondary or tertiary loop that runs 2 F warmer than the chilled water. This solution has been in use for several years and tends to work best for high-density applications with uniform IT-cabinet-row distribution. Because the RDHX units are typically mounted on the back of the IT cabinet, they do not impact the floor space.
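To make the RDHX example concrete, here is a minimal sizing sketch. It relies on the common water-side rule of thumb Q (Btu/h) = 500 x gpm x temperature rise (F), which is not stated in the article. The 30 kW cabinet is hypothetical, and the sketch assumes the 1.3 gpm/ton flow is sized on the heat the door actually removes. The 85% fraction and the 2 F loop offset are taken from the paragraph above.

```python
KW_PER_TON = 3.517       # 1 ton of refrigeration is about 3.517 kW
BTUH_PER_TON = 12000.0   # definition of a ton of refrigeration
WATER_FACTOR = 500.0     # Btu/h per gpm per deg F (about 8.33 lb/gal x 60 min/h)


def rdhx_sizing(cabinet_kw, chilled_water_f, fraction_removed,
                gpm_per_ton=1.3, loop_offset_f=2.0):
    """Estimate RDHX water flow and the heat left for the room to absorb.

    Assumption: flow is sized on the heat removed by the door, not on
    the full cabinet load.
    """
    if not 0.0 < fraction_removed <= 1.0:
        raise ValueError("fraction_removed must be in (0, 1]")
    removed_kw = cabinet_kw * fraction_removed
    removed_tons = removed_kw / KW_PER_TON
    flow_gpm = removed_tons * gpm_per_ton
    water_rise_f = removed_tons * BTUH_PER_TON / (WATER_FACTOR * flow_gpm)
    return {
        "rdhx_supply_f": chilled_water_f + loop_offset_f,
        "flow_gpm": flow_gpm,
        "water_rise_f": water_rise_f,
        "heat_to_room_kw": cabinet_kw - removed_kw,
    }


if __name__ == "__main__":
    # Hypothetical 30 kW cabinet served from 66 F chilled water
    for key, value in rdhx_sizing(30.0, 66.0, 0.85).items():
        print(f"{key}: {value:.2f}")
```

For the hypothetical 30 kW cabinet, this gives about 9.4 gpm of 68 F water with a rise of roughly 18.5 F, leaving 4.5 kW for the room air system to handle.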

Close-coupled: Close-coupled water-based cooling solutions include water-cooled IT cabinets that have coils and circulation fans built into the cabinet (see Figure 2). This system allows for totally enclosed and cooled IT equipment where the heat load is completely contained, with only a small amount (~5%) of the heat being released into the data center. This system has limitations similar to those of the RDHX, but it can generally neutralize the entire cooling load more readily. The cabinet is larger than a standard IT cabinet. However, because the equipment is enclosed, it is possible to mount higher densities of IT equipment in the cabinet due to the ability of the cabinet to maintain a much more uniform temperature, essentially eliminating any chance of a high-temperature cutout event. This solution also has been in use for several years and tends to work best for high-density applications, especially when the equipment is located in an existing low-density data center.

Direct-cooled: One of the primary challenges when cooling a data center is the ability to control how effectively the cooling load can be neutralized. A data center that uses cold air supplied to the room via raised floor or ducting comes with inherent difficulties, such as uncontrolled bypass air, imbalanced air delivery, and re-entrainment of hot exhaust air into the intakes of the computer equipment, all of which will usually present difficulties in keeping the IT equipment at allowable temperatures.

Most of these complications stem from proximity and physical containment; if the hot air escapes into the room before the cold air can mix with it and reduce the temperature, the hot air now becomes a fugitive and the cold air becomes an inefficiency in the system. In all air-cooled data centers, a highly effective method for reducing these difficulties is to use a partition system as part of an overall containment system that physically separates the hot air from the cold air, allowing for a fairly precise cooling solution. On a macro scale, it is possible to carefully control how much air comes into and out of the containment system and predict general supply and return temperatures. What cannot be done in this type of system is to ensure that the required airflow and temperature across the internal components in the computer system are being met. Because individual server fans vary their speed to control internal temperatures (based on workload), it is possible to starve the equipment of air, especially if the workload is small and the internal fans drop to minimum speed.

Next generation

In computing applications where the electrical density is at a point where industry-standard cooling solutions can no longer properly cool the equipment, using alternative methods, such as direct water cooling, becomes necessary. There is no hard-and-fast rule on the electrical-density cutoff when air cooling starts to become ineffective. A data center with a homogeneous IT equipment layout and optimized air distribution will be able to use air cooling to a much higher threshold than a heterogeneous data center with legacy IT equipment and less-than-optimal air distribution. Obviously, this is an IT-equipment-driven outcome, falling outside the purview of the facility’s HVAC engineering team. When the opportunity arises to develop a design for a direct-water-cooled computing system, it is essential to understand some important concepts and strategies.

Direct-cooling strategy: There are several approaches used by different manufacturers, such as a direct supply of water to a heat sink attached to the computer’s internal components, specifically the processor and memory modules. Another approach is to use a dry-type connection where the water stays outside of the server. The heat-transfer process is achieved by using thermal plates connected to heat pipes that are also connected to plates on the processor, memory, and other internal components. The other side of the thermal plate is connected to the cooling water.

Water temperature: The term "cooling water" is a misnomer in that the supply water can be as warm as 85 F to 105 F. The terms "warm-water cooling" or even "hot-water cooling" have emerged, even though each appears to be a contradiction in terms. This is really the centerpiece of water-cooled-computer energy efficiency. Because the internal components of a computer generally can happily operate far above 150 F, using higher-temperature water works great. Here’s the clincher: Using these water temperatures to cool computers can eliminate the use of compressorized cooling equipment. In most climates—even very warm ones—the energy use associated with traditional data center cooling solutions is significant because the energy use associated with compressorized cooling (chillers, condensing units, etc.) will be 50% or more of the total HVAC energy use.

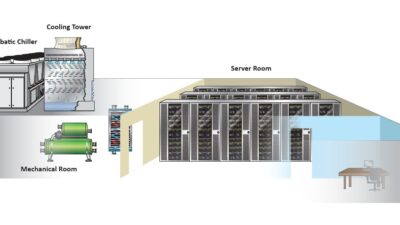

Geography: Although most climates allow for production of warm water used for cooling computers without compressors, the type and size of the heat-rejection system will depend on the distribution of the dry-bulb and wet-bulb temperatures. Analyzing climatic data early on in the project will provide guidance on the location, physical size, and capacity of the cooling towers, evaporative coolers, dry coolers, or whatever the heat-rejection schema is (see Figure 3).
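One simple way to screen a site is to count the share of hours in which an evaporative heat-rejection system could deliver the target water temperature without compressors. The sketch below is only an illustration: the approach temperatures and the sample wet-bulb readings are assumptions, not values from this article, and a real study would use a full year of hourly climatic data.

```python
def compressor_free_fraction(wet_bulb_temps_f, supply_target_f,
                             tower_approach_f=7.0, hx_approach_f=3.0):
    """Fraction of readings in which wet-bulb temperature plus the
    assumed cooling-tower and heat-exchanger approaches stays at or
    below the target supply-water temperature."""
    if not wet_bulb_temps_f:
        raise ValueError("need at least one wet-bulb reading")
    limit_f = supply_target_f - tower_approach_f - hx_approach_f
    hours_ok = sum(1 for wb in wet_bulb_temps_f if wb <= limit_f)
    return hours_ok / len(wet_bulb_temps_f)


if __name__ == "__main__":
    # Hypothetical wet-bulb readings in deg F, standing in for hourly data
    sample = [55.0, 62.0, 70.0, 78.0, 81.0]
    for target in (85.0, 105.0):
        share = compressor_free_fraction(sample, target)
        print(f"{target:.0f} F supply water: {share:.0%} of hours")
```

Running the same count against dry-bulb data, with an approach suited to dry coolers, gives a first indication of which heat-rejection type fits the site.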

Water distribution: Typically, cooling loops in the computers will be isolated from the base building water loop via a heat exchanger. Knowing that there would typically be a primary/secondary arrangement on the house-cooling water distribution system, the computer water loops become tertiary. Depending on the computer manufacturer, a cooling distribution unit (CDU) will provide the connection to the house secondary loop and pump the water through the piping within the computer. In addition to the physical requirements of the CDU, such as piping and electrical connections, the energy use of the CDU must be included in any energy-use metric, such as power usage effectiveness (PUE).
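The effect of the CDU on an energy metric can be illustrated with PUE, which is total facility energy divided by IT equipment energy. The annual energy figures below are hypothetical placeholders chosen only to show how leaving out the CDU understates the result.

```python
def pue(it_kwh, cooling_kwh, cdu_kwh, electrical_loss_kwh, other_kwh=0.0):
    """Power usage effectiveness: total facility energy / IT energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    total_kwh = it_kwh + cooling_kwh + cdu_kwh + electrical_loss_kwh + other_kwh
    return total_kwh / it_kwh


if __name__ == "__main__":
    # Hypothetical annual energy use, in MWh expressed as kWh
    it, cooling, cdu, losses = 1_000_000, 150_000, 20_000, 80_000
    print(f"PUE with CDU energy:    {pue(it, cooling, cdu, losses):.2f}")
    print(f"PUE without CDU energy: {pue(it, cooling, 0, losses):.2f}")
```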

Air-cooled equipment requirements: While the water-cooled computer may be the largest cooling load in the data center, there will most likely be IT and electrical equipment that still rely on air cooling. In addition, many of the water-cooled computers still have a small percentage of the cooling load (radiated heat) that must be air cooled. This necessitates the use of computer-room air-handling units (AHUs) or central AHUs. These units can be stand-alone, split-system DX units, or—if the facility is large enough—a small, separate chilled-water system can be installed. Regardless of the final design, the energy-use estimates must account for these systems.

Cooling-system redundancy: Driven by the IT equipment redundancy requirements, the cooling system must have built-in mechanisms for continuity of operation if there is a loss of power or a major equipment failure. Due to the diameter and length of the piping in a large water-cooled computer facility, the cooling-water piping can act as a storage tank that continues to circulate water (as one part of the overall reliability strategy) until the outage is corrected.
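How long the water in the piping can carry the load is a simple energy balance: stored heat capacity divided by the heat being added. The sketch below assumes the pumps stay on backup power, that the water mixes well, and that the loop can tolerate a stated temperature rise. The pipe size, length, load, and allowable rise in the example are hypothetical.

```python
import math

WATER_DENSITY_LB_PER_FT3 = 62.4   # approximate density of water
WATER_CP_BTU_PER_LB_F = 1.0       # specific heat of water
BTUH_PER_KW = 3412.14             # 1 kW expressed in Btu/h


def pipe_water_mass_lb(inner_diameter_in, length_ft):
    """Mass of water held in a round pipe of the given size."""
    area_ft2 = math.pi / 4.0 * (inner_diameter_in / 12.0) ** 2
    return area_ft2 * length_ft * WATER_DENSITY_LB_PER_FT3


def ride_through_minutes(inner_diameter_in, length_ft, load_kw,
                         allowable_rise_f):
    """Minutes until the loop water warms by allowable_rise_f while
    absorbing load_kw with no heat rejection."""
    stored_btu = (pipe_water_mass_lb(inner_diameter_in, length_ft)
                  * WATER_CP_BTU_PER_LB_F * allowable_rise_f)
    return stored_btu / (load_kw * BTUH_PER_KW) * 60.0


if __name__ == "__main__":
    # Hypothetical loop: 12 in. pipe, 1,000 ft long, 500 kW load, 10 F rise
    minutes = ride_through_minutes(12.0, 1000.0, 500.0, 10.0)
    print(f"Approximate ride-through: {minutes:.1f} min")
```

For this hypothetical loop the result is a little over 17 minutes, which is why the piping is only one part of the overall reliability strategy.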

Fan energy: There is another component of data center energy use that is influenced by the use of water-cooled computers: fan energy for the facility air-handling systems. Depending on the type of water-cooled computer, a certain percentage of the heat will be dissipated into the data center ambient environment, and not picked up by the cooling water. This amount of heat will range from approximately 5% to 30% of the total IT cabinet load. This cooling load will be taken care of by the house AHUs. This means that 70% to 95% of the fan energy of a fully air-cooled data center will be eliminated. And because the fan motor energy will be the first or second highest energy use, it is a very significant energy-reduction strategy.
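The 70% to 95% figure follows from treating house fan energy as proportional to the share of heat that still has to be removed by air. The sketch below makes that proportionality explicit. It is a simplifying assumption, and the baseline fan energy is a hypothetical number.

```python
def residual_fan_energy(baseline_fan_kwh, fraction_heat_to_air):
    """Fan energy still needed when only part of the IT heat reaches
    the room air, assuming fan energy scales linearly with air-side load."""
    if not 0.0 <= fraction_heat_to_air <= 1.0:
        raise ValueError("fraction_heat_to_air must be between 0 and 1")
    return baseline_fan_kwh * fraction_heat_to_air


def fan_energy_eliminated(baseline_fan_kwh, fraction_heat_to_air):
    """Fan energy avoided relative to a fully air-cooled baseline."""
    return baseline_fan_kwh - residual_fan_energy(
        baseline_fan_kwh, fraction_heat_to_air)


if __name__ == "__main__":
    baseline = 1_000_000.0  # hypothetical annual fan energy, kWh
    for share in (0.05, 0.30):
        saved = fan_energy_eliminated(baseline, share)
        print(f"{share:.0%} of heat to air: {saved / baseline:.0%} eliminated")
```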

Next steps

It is interesting that many of the first widely distributed water-cooled computers, such as the IBM ES/9000, were used in the business world. But over time, the focus has seemed to change. There seems to be a rekindled interest in using water-cooled computers for new high-performance computing (HPC) systems. To keep things in perspective, the percentage of HPC systems classified as "industry" on the Top500 List is 22%; the percentage of systems listed under the categories "government/academic/research" is 76%. Clearly, the number of HPC systems for nonindustry use dominates the list. However, looking at it from a different angle, if one adds up all of the peak-power demands of the systems on the list and analyzes the percentage breakdown, the gap shrinks significantly. "Industry" HPC systems account for 46% of the total power on the Top500 List; systems listed under the categories "government/academic/research" account for 52%. These numbers could be a signal that private industry has the ability to fund HPC systems to a higher level.

Another important organization in the HPC/supercomputing world is The Green500. The purpose of The Green500, similar to the Top500, is to provide a ranking of the most energy-efficient supercomputers in the world. Over the years, the result of placing an emphasis on computer speed and throughput without much consideration for electrical consumption, cooling-system energy, or overall environmental impact has been an extraordinary increase in the long-term operating cost of the supercomputing installation. As The Green500 puts it, " … [We] encourage supercomputing stakeholders to ensure that supercomputers are only simulating climate change and not creating climate change."

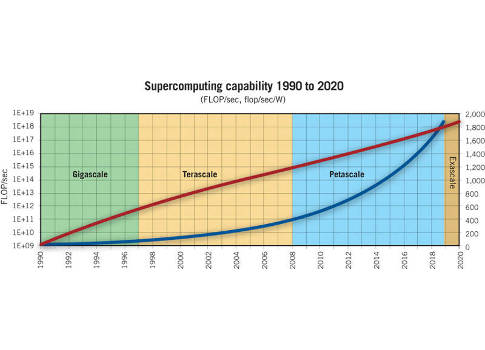

On July 29, 2015, President Obama signed an executive order, "Creating a National Strategic Computing Initiative." This order establishes the National Strategic Computing Initiative (NSCI). "The NSCI is a whole-of-government effort designed to create a cohesive, multi-agency strategic vision and federal investment strategy, executed in collaboration with industry and academia, to maximize the benefits of HPC for the United States." One of the tenets of the order is to continue to reinforce requirements that HPC systems must improve in performance and efficiency (see Figure 4).

Less than a decade ago, hitting petaFLOP/s performance was the absolute high-water mark. Now computer engineers, scientists, and researchers are coming close to reaching "exascale" computing. Scientists believe that exascale is the order of processing power of the human brain at the neural level. And, unbelievably, these same people are already looking at "zettascale" computers. However, the onset of big data has given business/enterprise users the ability to capture, store, compute, analyze, and visualize very massive data models, similar to the HPC industry. Perhaps it is just a matter of time before water-cooled computers fully penetrate the business ecosystem and gain a foothold based on computing- and data-intensive business models. So, when this happens, water-cooled computers will have finally come full circle.

More computing power

What is all of this massive computing power used for? Organizations will be able to:

- Design new products faster and more efficiently

- Simulate and anticipate multidimensional phenomena such as climate change

- Analyze massive data flows in real time

- Identify threats to national security

- Provide crisis management

Simply put, these computing systems will enable applications to capture, store, compute, analyze, and visualize very massive data models as fast as possible.

Bill Kosik is a distinguished technologist, Data Center Facilities Consulting at Hewlett-Packard Co. He is a member of the Consulting-Specifying Engineer editorial advisory board.