An expert panel provides engineering and design tips for HVAC systems in this Q&A

Respondents

- Peter Czerwinski, PE, Uptime ATD, Mechanical Engineer/Mission Critical Technologist, Jacobs, Pittsburgh

- Garr Di Salvo, PE, LEED AP, Associate Principal – Americas Data Center Leader, Arup, New York

- Scott Gatewood, PE, Electrical Engineer/Project Manager, Regional Energy Sector Leader, DLR Group, Omaha, Neb.

- Brian Rener, PE, LEED AP, Mission Critical Leader, SmithGroup, Chicago

What unique cooling systems have you specified for such projects?

Peter Czerwinski: I worked on a data center project with a client who was willing to accept higher IT rack intake air temperatures to achieve a low PUE and high energy savings. However, despite the relatively high ASHRAE TC 9.9 Class A2 upper limit of 95°F, the project’s location experiences some hours of high ambient wet bulb temperatures that require some mechanical cooling to maintain the temperature setpoint. This complicated the system layout and redundancy, so we specified air-cooled chillers with integrated free cooling, which gave the owner a large number of free cooling hours while maintaining redundancy and the ability to stay within the maximum air temperature limit year-round.
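To make the free cooling tradeoff above concrete, here is a minimal Python sketch that sorts hourly ambient temperatures into full free cooling, partial free cooling or compressor-only hours for an air-cooled chiller with an integrated free cooling coil. It assumes, as a simplification, that the free cooling coil responds to outdoor dry bulb; the 40°F full free cooling limit, the 75°F return water temperature and the sample readings are hypothetical placeholders, not project data.

```python
from collections import Counter


def cooling_mode(ambient_f: float, full_free_limit_f: float, return_water_f: float) -> str:
    """Classify one hour by cooling mode (all thresholds are hypothetical).

    - 'free': ambient is cold enough for the free cooling coil to carry the whole load
    - 'partial': ambient is below the return water temperature, so the coil
      pre-cools the water and compressors trim the rest
    - 'mechanical': ambient is at or above the return water temperature; compressors only
    """
    if ambient_f <= full_free_limit_f:
        return "free"
    if ambient_f < return_water_f:
        return "partial"
    return "mechanical"


def tally_modes(hourly_ambient_f, full_free_limit_f=40.0, return_water_f=75.0):
    """Count hours in each mode for a sequence of hourly ambient temperatures."""
    counts = Counter(
        cooling_mode(t, full_free_limit_f, return_water_f) for t in hourly_ambient_f
    )
    return {mode: counts.get(mode, 0) for mode in ("free", "partial", "mechanical")}


# Hypothetical sample: eight hourly readings in °F
sample = [28, 35, 40, 52, 68, 74, 81, 90]
print(tally_modes(sample))
```

Running the same tally against a full year of hourly weather data for a candidate site gives a first look at how many hours would still need compressors; a real study would use manufacturer performance curves instead of fixed thresholds.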

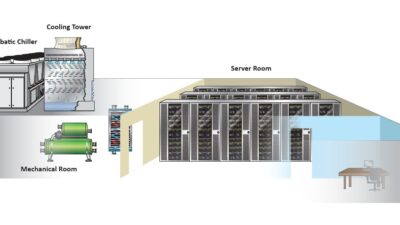

Garr Di Salvo: Conventional mechanical cooling systems still dominate the data center world, comprising the bulk of existing inventory. A tried-and-tested technology, refrigeration-based systems offer a solution that can be applied in a wide variety of locations. Many of the largest-scale facilities have moved toward evaporative solutions, which take advantage of the full operational capabilities of modern IT equipment and offer tremendous savings in energy and water use. To provide maximum flexibility for future needs, the Lawrence Berkeley National Laboratory sought a facility that could accommodate both adiabatic and mechanical cooling options and also allow for future delivery of water to the cabinet.

What unusual or infrequently specified products or systems did you use to meet challenging cooling needs?

Peter Czerwinski: With high IT rack densities becoming the norm, liquid cooling has become an attractive option for data center owners who want to fit as much IT capacity as possible into the least amount of space. Liquid cooling can be deployed via rear door heat exchangers, in-row cooling, direct-to-chip cooling or a combination of these technologies. On a recent project, the owner’s cooling needs were met by fitting all of its racks with rear door heat exchangers, allowing it to operate high-density racks while maintaining reliability.

Brian Rener: Thermosyphons are used with water-cooled servers or as a supplement to cooling tower-based heat rejection in chilled water systems. Acting much like a radiator, a thermosyphon uses refrigerant to reject heat from the water to the atmosphere. During cooler times of the year, thermosyphons operate in lieu of cooling towers to provide reduced-energy cooling with no water usage. The data center in the National Renewable Energy Laboratory’s Energy Systems Integration Facility in Golden, Colo., consists of water-cooled racks. The building uses a thermosyphon in conjunction with cooling towers to operate with a PUE of 1.04. The thermosyphon maintained operating efficiency while cutting water usage in half.
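One way to picture combined thermosyphon and cooling tower operation is as a split of the heat load that shifts with outdoor temperature. The Python sketch below assumes, hypothetically, that thermosyphon capacity is proportional to the difference between the return water temperature and outdoor dry bulb, with any remainder sent to the cooling towers. The 25 kW/°F sizing constant, 85°F return water and 1,000 kW load are illustrative only and are not taken from the NREL facility.

```python
def split_heat_rejection(load_kw, water_return_f, dry_bulb_f, kw_per_degree_f):
    """Split a heat load between a thermosyphon (dry) and cooling towers (wet).

    Simplifying assumption: thermosyphon capacity = kw_per_degree_f multiplied
    by the difference between return water temperature and outdoor dry bulb.
    kw_per_degree_f is a hypothetical sizing constant, not a published value.
    """
    difference = max(water_return_f - dry_bulb_f, 0.0)
    thermosyphon_kw = min(load_kw, kw_per_degree_f * difference)
    tower_kw = load_kw - thermosyphon_kw  # towers (evaporative) carry the rest
    return thermosyphon_kw, tower_kw


# Hypothetical 1,000 kW load, 85°F return water, thermosyphon sized at 25 kW/°F
for db in (30, 60, 80, 95):
    dry, wet = split_heat_rejection(1000, 85, db, 25)
    print(f"{db}°F dry bulb: thermosyphon {dry:.0f} kW, towers {wet:.0f} kW")
```

In cold hours the thermosyphon carries everything and the towers (and their water use) drop out; in hot hours the towers take over.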

Describe a project in which the building used free cooling.

Garr Di Salvo: Most of the greenfield projects we see use some form of free cooling. Evaporative cooling offers tremendous energy and water savings and is employed on many of the hyperscale projects we are involved with in North America and Europe. In Curitiba, Brazil, climate, water availability and air quality concerns demanded an alternative approach. There we employed a hybrid air-cooled approach that pre-cooled the return fluid to reduce the load on the chiller compressors. Identifying a local source for this technology was key to the success of this build.
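The effect of pre-cooling the return fluid can be estimated with a simple temperature-drop ratio. This Python sketch assumes constant fluid flow (so the load removed is proportional to the temperature drop), a hypothetical 5°F coil approach to ambient and an illustrative 80°F return / 65°F supply loop; none of these values come from the Curitiba project.

```python
def precool_fraction(return_f, supply_f, ambient_f, approach_f=5.0):
    """Fraction of the cooling load removed by an air-cooled pre-cooling coil.

    Assumes (hypothetically) that the coil cools the returning fluid to within
    approach_f of ambient but never below the supply setpoint; the chiller
    compressors handle whatever temperature drop remains.
    """
    leaving_precooler = max(ambient_f + approach_f, supply_f)
    if leaving_precooler >= return_f:
        return 0.0  # ambient too warm: the chiller carries the full load
    return (return_f - leaving_precooler) / (return_f - supply_f)


# Hypothetical 80°F return / 65°F supply loop at several ambient temperatures
for amb in (50, 65, 72, 80):
    print(amb, round(precool_fraction(80, 65, amb), 2))
```

Every degree the coil removes is a degree the compressors do not have to, which is why even partial pre-cooling in a warm climate can pay off.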

Peter Czerwinski: A recent data center project was designed to use air-cooled chillers with integrated free cooling. These chillers used ambient air to cool the refrigerant, providing 100% of the cooling capacity without compressors at ambient temperatures of 40°F and below. The project was an existing building, previously used for laboratories and manufacturing, that had multiple stories and limited space for new cooling equipment. By integrating the economizer components into the chillers, the design eliminated the need for dry coolers, which take up a great deal of space. The data center design achieved an annualized average PUE of 1.15.
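PUE is total facility energy divided by IT equipment energy. For an annualized figure, a common approach, sketched below in Python, is to sum energy over the whole year before dividing rather than averaging the ratios of individual intervals. The quarterly meter readings are hypothetical.

```python
def annualized_pue(facility_kwh, it_kwh):
    """PUE over a period: total facility energy divided by IT equipment energy.

    Energies are summed over the whole period before dividing, which weights
    each interval by its actual consumption instead of averaging ratios.
    """
    if len(facility_kwh) != len(it_kwh):
        raise ValueError("facility and IT series must be the same length")
    total_it = sum(it_kwh)
    if total_it <= 0:
        raise ValueError("IT energy must be positive")
    return sum(facility_kwh) / total_it


# Hypothetical quarterly meter readings (kWh)
it = [900_000, 1_000_000, 1_100_000, 1_000_000]
facility = [1_040_000, 1_160_000, 1_320_000, 1_160_000]
print(round(annualized_pue(facility, it), 3))
```

Summing first matters because a low-load quarter with a poor ratio should count for less than a high-load quarter.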

Brian Rener: The University of Utah’s data center consists predominantly of air-cooled servers and can operate with an outside air economizer for free cooling. The high desert climate of Salt Lake City, Utah, has few high-humidity days, and with the data center’s elevated temperature range, the facility operates in free cooling economizer mode for the majority of the year. A weather station monitors outdoor conditions so the controls can minimize outside air during periods of elevated humidity or poor air quality. The data center operates with a PUE of less than 1.3.
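The weather-station logic described above amounts to an enable/disable decision for each control interval. This Python sketch is a hypothetical sequence, not the University of Utah’s actual controls: the 75°F dry bulb and 59°F dew point limits and the air quality flag are placeholder inputs.

```python
from dataclasses import dataclass


@dataclass
class OutdoorConditions:
    dry_bulb_f: float
    dew_point_f: float
    air_quality_ok: bool  # hypothetical flag, e.g. from a particulate or smoke sensor


def economizer_enabled(conditions, max_dry_bulb_f=75.0, max_dew_point_f=59.0):
    """Decide whether outside air economizer mode is allowed this interval.

    Thresholds are hypothetical placeholders; a real sequence would take them
    from the owner's allowable envelope.
    """
    if not conditions.air_quality_ok:
        return False  # poor air quality: minimize outside air
    if conditions.dew_point_f > max_dew_point_f:
        return False  # too humid: minimize outside air
    return conditions.dry_bulb_f <= max_dry_bulb_f


print(economizer_enabled(OutdoorConditions(68, 40, True)))
print(economizer_enabled(OutdoorConditions(68, 62, True)))
```

Checking air quality and humidity before temperature mirrors the priority described in the answer: outside air is minimized whenever either condition is unfavorable, regardless of how cool it is.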

How have you worked with HVAC system or equipment design to increase a building’s energy efficiency?

Brian Rener: Data centers or large server rooms with hot aisle containment can use chilled water return for primary data center cooling, injecting chilled water supply only as needed during high load conditions. This approach lowers building pumping energy and widens the chilled water system temperature delta, allowing the chillers to operate more efficiently. At times of the year when the building does not require colder chilled water, the supply temperature of the entire chilled water system can be reset upward to match that of the data center, increasing free or reduced-energy cooling.
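The pumping benefit of a wider temperature delta follows from the standard water-side relation gpm = Btu/h ÷ (500 × ΔT). The Python sketch below applies it to a hypothetical 1,000-ton load; doubling ΔT from 10°F to 20°F halves the required flow.

```python
def chilled_water_gpm(load_tons, delta_t_f):
    """Water flow needed to carry a load: gpm = Btu/h / (500 x delta T).

    500 is the usual water constant (about 8.33 lb/gal x 60 min/h x 1 Btu/lb-°F);
    1 ton of refrigeration = 12,000 Btu/h.
    """
    if delta_t_f <= 0:
        raise ValueError("delta_t_f must be positive")
    btu_per_hour = load_tons * 12_000
    return btu_per_hour / (500 * delta_t_f)


# Hypothetical 1,000-ton load at two design temperature deltas
for dt in (10, 20):
    print(f"delta T {dt}°F: {chilled_water_gpm(1000, dt):,.0f} gpm")
```

Less flow for the same load means smaller pumps or pumps running at lower speed, which is where the pumping energy reduction comes from.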

Garr Di Salvo: Existing systems can often be optimized to dramatically improve operational efficiency. At the then-16-year-old Massachusetts Information Technology Center, we used a set of add-on transducers to supplement the available facility measurements. Through a series of site surveys, operational assessments and system modeling, we identified a set of energy conservation measures, including “right-sizing” of major equipment (incorporated as part of life cycle facility upgrades) along with changes to operational practices and airflow management. Together these reduced facility energy use by 24% and water usage by 37%.

What best practices should be followed to ensure an efficient HVAC system is designed for this kind of building?

Garr Di Salvo: Some fundamental ways to ensure operational efficiency include using variable frequency drives or electronically commutated motors to regulate system flows instead of constant-speed pumps and motors with control valves and dampers. High-efficiency equipment can be selected and right-sized based on the load profile of the facility, and control systems can be deployed to take advantage of free cooling opportunities. Rigorous airflow management is also essential. Ideally, you want to provide a single path for water and airflow and include hot/cold aisle containment where possible.
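Much of the savings from variable-speed drives comes from the fan and pump affinity laws, under which power varies roughly with the cube of speed. This Python sketch computes the ideal cube-law fraction; actual savings are smaller because of system static pressure and motor and drive losses, so treat the numbers as optimistic estimates.

```python
def affinity_power_fraction(speed_fraction):
    """Ideal fan/pump affinity law: power varies with the cube of speed.

    Real systems fall short of the cube law because of static pressure and
    motor and drive losses, so the result overstates achievable savings.
    """
    if not 0.0 < speed_fraction <= 1.0:
        raise ValueError("speed_fraction must be in (0, 1]")
    return speed_fraction ** 3


for speed in (1.0, 0.9, 0.8, 0.6):
    print(f"{speed:.0%} speed -> about {affinity_power_fraction(speed):.0%} of full-speed power")
```

Even a modest speed reduction yields a large ideal power reduction (80% speed is roughly half the power), which is why throttling a constant-speed machine with valves or dampers compares poorly.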

Brian Rener: Hot aisle containment allows data center air handling unit supply temperatures to be reset upward to just below the room temperature. This approach increases the opportunities for outside air economizer free cooling, where available, and also increases chiller efficiency.
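Why containment permits a higher supply temperature can be shown with a simple mixing model, in which rack inlet air is a blend of supply air and recirculated hot aisle air. In this Python sketch the recirculation fractions, the 80°F inlet target and the 100°F hot aisle temperature are hypothetical. With no recirculation the supply can sit at the inlet target, and every increment of recirculation forces it lower.

```python
def required_supply_temp_f(target_inlet_f, hot_aisle_f, recirculation_fraction):
    """Supply air temperature needed to hold a target rack inlet temperature.

    Models rack inlet air as a simple mix:
        inlet = (1 - r) * supply + r * hot_aisle
    where r is the hypothetical fraction of hot exhaust air recirculating to
    the inlets. Effective containment corresponds to r close to 0.
    """
    r = recirculation_fraction
    if not 0.0 <= r < 1.0:
        raise ValueError("recirculation_fraction must be in [0, 1)")
    return (target_inlet_f - r * hot_aisle_f) / (1.0 - r)


# Hypothetical 80°F inlet target with a 100°F hot aisle
for r in (0.0, 0.1, 0.2):
    print(r, round(required_supply_temp_f(80, 100, r), 1))
```

A higher allowable supply temperature widens the range of outdoor conditions in which the economizer or warmer chilled water can do the job.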

What are some of the challenges or issues when designing for water use in such facilities?

Brian Rener: Large data centers that are optimized for energy use can consume a significant amount of water each year. Efforts to lower water usage by changing the system approach often come with a corresponding loss of energy efficiency. The best solutions take advantage of evaporative cooling during peak design periods but also include other equipment, such as thermosyphons, that can operate efficiently during cooler times of the year. Such a balanced approach can save both energy and water operating costs now and in the years to come.
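The water side of this balance can be roughed out by assuming that heat rejected evaporatively leaves as the latent heat of water, about 1,000 Btu/lb as a rule of thumb. The Python sketch below compares an all-evaporative case with a hybrid case in which hours at or below a hypothetical 50°F dry bulb are handled by dry equipment such as thermosyphons. The loads, temperatures and threshold are illustrative, and blowdown and drift are ignored.

```python
LATENT_HEAT_BTU_PER_LB = 1_000.0  # rough rule-of-thumb value for water
WATER_LB_PER_GAL = 8.34


def evaporated_gallons(heat_rejected_btu):
    """Rough evaporation estimate assuming all rejected heat leaves as latent heat.

    This overstates evaporation somewhat and ignores blowdown and drift,
    which add to real makeup water use.
    """
    return heat_rejected_btu / LATENT_HEAT_BTU_PER_LB / WATER_LB_PER_GAL


def water_use_gallons(hourly_load_btu, hourly_dry_bulb_f, dry_limit_f=None):
    """Sum evaporation over the hours the evaporative equipment runs.

    If dry_limit_f is given, hours at or below it are assumed (hypothetically)
    to be handled entirely by dry equipment such as thermosyphons.
    """
    total = 0.0
    for load, db in zip(hourly_load_btu, hourly_dry_bulb_f):
        if dry_limit_f is not None and db <= dry_limit_f:
            continue  # dry heat rejection: no evaporation this hour
        total += evaporated_gallons(load)
    return total


# Hypothetical: four hours at 3,412,000 Btu/h (about 1 MW) each
loads = [3_412_000] * 4
temps = [35, 50, 70, 90]
all_wet = water_use_gallons(loads, temps)
hybrid = water_use_gallons(loads, temps, dry_limit_f=50)
print(round(all_wet), round(hybrid))
```

The savings in the hybrid case scale with the share of hours cool enough for dry operation, which is why the benefit depends so heavily on climate.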

Peter Czerwinski: Care must be taken when piping for chilled water, domestic water, condenser water or other utilities runs near critical IT or electrical equipment. Such piping should be installed over a drip tray with leak detection, and spray guards should be provided at pipe joints.