In the information age, data centers can be the beating heart of not just a building, but an entire global corporation. The electrical engineer plays a key role in the design of these mission critical facilities.

Respondents

- Andrew Baxter, PE, Principal/MEP Engineering Director, Page, Austin, Texas

- Brandon Kingsley, PE, CxA, CEM, Project Manager, Primary Integration Solutions Inc., Charlotte, N.C.

- Keith Lane, PE, RCDD, NTS, RTPM, LC, LEED AP BD+C, President/Chief Engineer, Lane Coburn & Associates LLC, Seattle

- Dwayne Miller, PE, RCDD, CEO, JBA Consulting Engineers, Hong Kong

CSE: Describe some recent electrical/power system challenges you encountered when designing a new building, or working in an existing building.

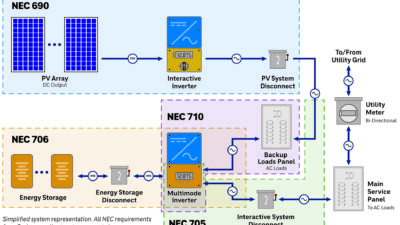

Lane: The standard backup solutions for mission critical environments include standby generators and UPS systems. It is critical to ensure that the standby generators are not overloaded under any failure condition. Additionally, the generator must perform properly under all failure modes to avoid harmonic distortion. The engineer must specify a generator with the appropriate level of subtransient reactance. Also, it is a good idea to specify a generator that is "mission critical" rated for a load factor of 85%.

Baxter: We are seeing the implementation of shielding systems to protect facilities from electromagnetic pulse (EMP). This has created a challenge in the mission critical/data center industry: how to bring the large amount of power into such a facility. Doing so requires large electrical filters at the shield penetrations and significantly limits the amount of back-and-forth through the shield, quite often requiring the design of separate power systems for inside and outside of the shielded areas.

Kingsley: Replacing critical electrical systems in any existing building is challenging for designers, constructors, installers, and commissioning agents. A lot of upfront coordination during design and construction must take place to ensure that the live data center does not have a disruption. This can require construction schedules and methods of procedure (MOP) that are detailed out to the hour for major outages. A key challenge for the commissioning agent is identifying what impact the equipment being tested will have on the data center if there is a failure during testing, and ensuring that a back-out plan is in place. In existing facilities, the available drawings may not be accurate, and additional field investigation is required along with multiple coordination meetings to identify all of the risks.

CSE: Discuss new construction data center projects, and the power usage effectiveness (PUE) numbers you’ve achieved.

Kingsley: We’ve commissioned new data center projects that have achieved PUE numbers around 1.05 to 1.1 for mechanical systems that use no mechanical refrigeration, that is, indirect or direct evaporative cooling systems.

Baxter: The data centers with which we have been involved over the past 3 years have had annual PUEs ranging from 1.3 at the high end to less than 1.09 in a facility that used no mechanical cooling for the critical loads. All of these facilities have used some sort of economizer to take as much free cooling from the ambient environment as possible. A critical feature common to all of these facilities was some sort of containment for the IT rack systems; whether it be hot aisle, cold aisle, or rack-based, they all had containment.
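For readers less familiar with the metric, PUE is the ratio of total facility energy to IT equipment energy over the same period, so a value of 1.0 would mean no overhead at all. The following is a minimal sketch of that arithmetic; the function name and the annual energy figures are hypothetical and are not taken from any project described by the respondents.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Return power usage effectiveness: total facility energy / IT energy.

    Both arguments must cover the same period (for example, one year).
    """
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical annual figures, for illustration only.
it_kwh = 10_000_000          # energy delivered to IT equipment
overhead_kwh = 900_000       # cooling, UPS and transformer losses, lighting
annual_pue = pue(it_kwh + overhead_kwh, it_kwh)
print(f"Annual PUE: {annual_pue:.2f}")
```

With these assumed numbers the overhead is 9% of the IT energy, which corresponds to the low end of the range mentioned above.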

Lane: There are many design opportunities available to improve PUE, including more efficient UPS systems, modular implementation, transformerless UPS systems, more efficient transformers, more efficient lighting and lighting controls, warmer cold aisle temperatures, and hot aisle containment. Building with modular components also helps ensure that electrical components are loaded at levels where they run more efficiently. For example, some lightly loaded 2N data centers could have loading percentages during normal operation of less than 20%. UPS systems and transformers can run far less efficiently when loaded to 20% or less.
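The loading point can be illustrated with simple arithmetic. The sketch below assumes a 2N system in which the two sides share the critical load equally during normal operation and each side is sized to carry the full design load alone; the function name and the kW values are hypothetical.

```python
def per_side_loading_2n(actual_load_kw: float, side_capacity_kw: float) -> float:
    """Return the fraction of capacity used on each side of a 2N system.

    Assumes the load is split evenly between the A and B sides in normal
    operation, which is an illustrative simplification.
    """
    if side_capacity_kw <= 0:
        raise ValueError("side capacity must be positive")
    if actual_load_kw < 0:
        raise ValueError("load cannot be negative")
    return (actual_load_kw / 2.0) / side_capacity_kw


# Hypothetical example: each side rated 1,000 kW, facility running at 350 kW.
loading = per_side_loading_2n(actual_load_kw=350.0, side_capacity_kw=1000.0)
print(f"Each side is loaded to {loading:.1%} of its rating")
```

Under these assumptions, a facility running at 35% of its design load leaves each UPS and transformer below the 20% loading level mentioned above, which is why modular build-out that matches installed capacity to actual load can help efficiency.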

CSE: Have there been recent changes to wiring, cabling, or cable rack designs? If so, describe these changes.

Kingsley: As commissioning agents, we’ve noticed that wiring, cabling, and cable rack designs vary based on each client’s preference. The biggest change is the amount of cabling being installed, especially in cloud data centers. We are seeing large increases in the quantity of fiber being installed to increase network reliability, as well as flexibility for future expansion, and this requires more and larger cable trays, which can affect airflow in the data center. As commissioning agents, we have to verify that these are accessible for maintenance and do not adversely affect operation of the data center.

CSE: Discuss how Neher-McGrath calculations have changed or defined a data center’s design.

Lane: At Lane Coburn & Associates, we have a significant amount of experience providing Neher-McGrath duct bank heating calculations for data centers and other mission critical facilities. Heating calculations are recommended for mission critical facilities when large electrical duct banks with large numbers of conduits and conductors are routed in the earth. The heating calculations are performed to determine if any de-rating of the conductors is required. Where an underground electrical duct bank installation does not match the configurations identified in the NEC examples, the NEC indicates in section 310-15(b) that calculations can be performed to determine the actual rating of the conductors. A formula provided in the NEC can be used under "engineering supervision" to provide these calculations. This formula is typically not sufficient because it does not include the effect of mutual heating between cables from other duct banks. If the intent is to use native backfill, soil samples and dryout curve testing per IEEE 442 are required to evaluate the actual value of Rho to use in the calculations. The average Rho values listed in the NEC have been used in the past, but should not be used for site-specific calculations because it has been our experience that there is no average soil. An evaluation of worst-case moisture content must also be provided. If the engineer has used Rho values that are too low and do not represent the actual value, overheating, thermal runaway, and failure can occur. If the engineer uses overly conservative values, too many conduits will be used, resulting in more cost, more space, and higher fault current levels.
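As a rough illustration of the kind of calculation being described, the sketch below uses the form in which the NEC engineering-supervision ampacity formula is commonly quoted: I = sqrt((Tc - Ta) / (Rdc x (1 + Yc) x Rca)) x 10^3 amperes. Here Tc and Ta are the conductor and ambient temperatures in °C, Rdc is the conductor dc resistance in microohms per foot at Tc, Yc is the ac resistance increment from skin and proximity effects, and Rca is the effective thermal resistance between conductor and ambient in thermal ohm-feet. The function name and all input values are hypothetical, the exact wording and symbols should be checked against the applicable code edition, and, as noted above, this single-formula approach does not capture mutual heating from other duct banks.

```python
import math


def ampacity_amperes(tc_c: float, ta_c: float, rdc_microohm_per_ft: float,
                     yc: float, rca_thermal_ohm_ft: float) -> float:
    """Ampacity from the commonly quoted engineering-supervision formula.

    I = sqrt((Tc - Ta) / (Rdc * (1 + Yc) * Rca)) * 1000, in amperes.
    """
    if tc_c <= ta_c:
        raise ValueError("conductor temperature must exceed ambient")
    if rdc_microohm_per_ft <= 0 or rca_thermal_ohm_ft <= 0 or yc < 0:
        raise ValueError("Rdc and Rca must be positive, Yc non-negative")
    denominator = rdc_microohm_per_ft * (1.0 + yc) * rca_thermal_ohm_ft
    return 1000.0 * math.sqrt((tc_c - ta_c) / denominator)


# Illustrative inputs only; real values come from cable data and soil testing.
# A higher soil Rho raises Rca, so two assumed Rca values are compared.
for rca in (5.0, 7.0):
    amps = ampacity_amperes(tc_c=75.0, ta_c=20.0, rdc_microohm_per_ft=25.8,
                            yc=0.02, rca_thermal_ohm_ft=rca)
    print(f"Rca = {rca:.1f} thermal ohm-ft -> about {amps:.0f} A")
```

The comparison shows the direction of the effect only: a larger thermal resistance between conductor and ambient lowers the calculated ampacity, which is why an unrealistically low Rho can lead to conductors that overheat in service.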

Baxter: Neher-McGrath calculations are not new, but awareness of the need for them continues to spread. The cost benefit of installing large power feeders underground is somewhat offset by the larger conductor sizes and quantities required to support the load at higher temperatures underground. Underground installation is still worthwhile in most instances, but each case should be evaluated separately. The biggest hurdle that Neher-McGrath calculations present to a data center project is schedule; each duct bank must be modeled separately, and none of that engineering work can begin until the materials to be used (native soil, select fill, concrete) have been tested for their thermal resistivity. This process of testing and simulating can delay the start of construction if the project team is not focused on it from the start of design.