In today’s digital age, businesses rely on running an efficient, reliable, and secure operation, especially with mission-critical facilities such as data centers. Here, engineers experienced with such facilities share advice and tips on ensuring project success with regard to HVAC.

Respondents

Doug Bristol, PE, Electrical Engineer, Spencer Bristol, Peachtree Corners, Ga.

Terry Cleis, PE, LEED AP, Principal, Peter Basso Associates Inc., Troy, Mich.

Scott Gatewood, PE, Project Manager/Electrical Engineer/Senior Associate, DLR Group, Omaha, Neb.

Darren Keyser, Principal, kW Mission Critical Engineering, Troy, N.Y.

Bill Kosik, PE, CEM, LEED AP, BEMP, Senior Engineer – Mission Critical, exp, Chicago

Keith Lane, PE, RCDD, NTS, LC, LEED AP BD&C, President, Lane Coburn & Associates LLC, Seattle

John Peterson, PE, PMP, CEM, LEED AP BD+C, Program Manager, AECOM, Washington, D.C.

Brandon Sedgwick, PE, Vice President, Commissioning Engineer, Hood Patterson & Dewar Inc., Atlanta

Daniel S. Voss, Mission Critical Technical Specialist, M.A. Mortenson Co., Chicago

CSE: What unique cooling systems have you specified into a data center? Describe a difficult climate in which you designed a cooling system.

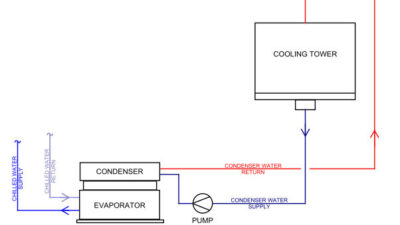

Kosik: One of the more unique systems I have designed is using water to directly cool computers. This approach is most common for supercomputers and other high-performance computing installations. The water is pumped (using secondary and tertiary water loops) directly to heat sinks mounted on the processor (CPU), DIMM (memory), and graphics processing unit (GPU). These components internal to the computer can be “cooled” using 100°F water. With this temperature, data centers no longer need to use chillers or other compressorized cooling equipment. All that is needed is a cooling tower or similar heat-rejection device. Since last generation’s supercomputers are today’s corporate servers, soon this cooling technique will also lose its unique status. The great thing about this type of cooling system is that it works in most climates because of the high temperature. So even in climates like Singapore, which is hot and humid across the entire year, most of the cooling for the computers can be done without the use of compressors.
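The reasoning about warm-water cooling can be sketched numerically. A cooling tower cannot deliver water colder than the ambient wet-bulb temperature plus its approach, and each intermediate heat exchanger adds its own approach. The short Python sketch below checks whether a required supply temperature is reachable without compressors. The function names and every temperature value are illustrative assumptions, not figures from the respondents or design data for any site.

```python
def achievable_supply_temp_f(wet_bulb_f, tower_approach_f, loop_approaches_f=()):
    """Estimate the coldest water a tower-only plant can deliver to the load.

    Tower leaving-water temperature is the ambient wet bulb plus the tower
    approach; each downstream heat exchanger (for example, secondary and
    tertiary loops) adds its own approach on top of that.
    """
    return wet_bulb_f + tower_approach_f + sum(loop_approaches_f)


def compressor_free(required_supply_f, wet_bulb_f, tower_approach_f,
                    loop_approaches_f=()):
    """Return True if the tower alone can meet the required supply temperature."""
    achievable = achievable_supply_temp_f(
        wet_bulb_f, tower_approach_f, loop_approaches_f
    )
    return achievable <= required_supply_f


# Hypothetical hot, humid hour: 82 F wet bulb, 7 F tower approach,
# and two heat exchangers with a 3 F approach each (all assumed values).
warm_water_ok = compressor_free(100.0, 82.0, 7.0, (3.0, 3.0))
chilled_water_ok = compressor_free(65.0, 82.0, 7.0, (3.0, 3.0))
print(warm_water_ok, chilled_water_ok)
```

Under these assumed conditions the 100°F loop is reachable with the tower alone, while a conventional 65°F loop is not, which is the point Kosik makes about high supply temperatures.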

Voss: I’ve been fortunate to work with cooling systems ranging from traditional chilled-water systems to “swamp coolers,” heat wheels (air-to-air), and heat pipes (air-to-air) in various data centers across the country. The elevated, hot, dry, and dusty desert area in the state of Washington posed unique challenges in how much particulate was trapped in the filter systems and how quickly it accumulated.

CSE: What unusual or infrequently specified products or systems did you use to meet challenging HVAC needs?

Sedgwick: While there is some consistency in the systems that are used, no two data centers are the same. We’ve commissioned several unique systems, such as a custom-built retrofit using heat pipes to transfer energy between airstreams in heat-recovery systems, infrared sensors controlling temperature on mission critical equipment with a tight temperature tolerance, biometric sensors that look for biological hazards in outdoor airstreams in the event of a biological attack, and packed towers used to remove odors and chemicals from exhaust air.

Voss: With a goal of keeping the power usage effectiveness (PUE) as low as possible, a cooling system with a heat wheel (air-to-air heat exchange) was proposed as an option and later chosen. The owner had done his homework on this option and was fully supportive of incorporating the heat wheel into the final design documents.
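For readers less familiar with the metric, PUE is the ratio of total facility energy to the energy delivered to IT equipment, so a value closer to 1.0 means less overhead for cooling and power distribution. The minimal Python sketch below shows the arithmetic. The function name and the energy figures are hypothetical and are not taken from the project Voss describes.

```python
def power_usage_effectiveness(total_facility_kwh, it_equipment_kwh):
    """Compute PUE as total facility energy divided by IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical annual figures: 1,300 MWh for the whole facility,
# of which 1,000 MWh reaches the IT equipment.
pue = power_usage_effectiveness(1_300_000, 1_000_000)
print(pue)
```

Lowering the cooling energy in the numerator, for example by using a heat wheel in place of compressor-based cooling for part of the year, is what moves the ratio toward 1.0.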

CSE: What types of waste-heat recovery, combined heat and power, or other systems have you designed for data centers? Please describe the challenges and solutions.

Voss: A hydronic heat-recovery system distributed the rejected heat from the data hall via pipes to the office warm-water heating system. This system ran the warmed water through several variable air volume (VAV) boxes to heat the office and support space. A challenge occurred early on when the actual data hall load was less than the minimum calculated to heat the office space. Fortunately, the design also included electric reheat coils in some of the VAV boxes.

Sedgwick: We’ve commissioned a wide range of these types of systems. For example, in one facility new and retrofit heat pipes (which transfer energy without moving parts) were installed to capture waste energy from exhaust airstreams and transfer it to the incoming airstream. Adaptive humidity control is used in some facilities to allow areas in the data hall to drift within temperature and relative humidity limits while analyzing humidity and temperature requirements based on airflow within the space. It prevents unnecessary humidification or dehumidification since relative humidity can be kept between 30% and 40% by simply adjusting the local temperature slightly up or down. These localized changes leverage normal fluctuations in a data hall to help reduce energy usage. Chilled-water plant management is an approach used in some facilities to improve humidity control in the data hall by controlling waterflow rates. This can be applied in air- and water-cooled chilled-water systems to significantly reduce chilled-water system energy consumption.
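The link between local temperature and relative humidity described above can be illustrated with a short calculation. At a fixed moisture content, warming the air raises its saturation vapor pressure and therefore lowers its relative humidity. The Python sketch below uses the Magnus approximation for saturation vapor pressure. It is a simplified illustration, not the control logic of any system Sedgwick commissioned, and the function names and starting conditions are assumptions.

```python
import math


def saturation_vapor_pressure_hpa(temp_c):
    """Magnus approximation of saturation vapor pressure over water, in hPa."""
    return 6.112 * math.exp(17.62 * temp_c / (243.12 + temp_c))


def rh_after_temperature_change(rh_percent, temp_c, new_temp_c):
    """Relative humidity after a temperature change at constant moisture content.

    The actual vapor pressure is held fixed; only the saturation pressure
    changes with temperature.
    """
    vapor_pressure = rh_percent / 100.0 * saturation_vapor_pressure_hpa(temp_c)
    return 100.0 * vapor_pressure / saturation_vapor_pressure_hpa(new_temp_c)


# Assumed starting point: a zone at 24 C and 42% RH, just above a 40% limit.
# Letting the zone drift up by 1 C lowers RH with no dehumidification.
new_rh = rh_after_temperature_change(42.0, 24.0, 25.0)
print(round(new_rh, 1))
```

In this assumed case a one-degree increase brings the zone back under 40% relative humidity, which is why small local temperature adjustments can avoid running humidifiers or dehumidifiers.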

CSE: What types of air economizers or other strategies are owners and facility managers requesting in data centers?

Peterson: We’ve seen the trend toward indirect air economizers in most cases. The risk of bringing outside air directly into the data center is too great in many cases: humidity, smoke, and entrainment of other particulates are too problematic, as even one additional server failure can negate all the benefits.

Kosik: Economization clearly continues to be one of the leading energy-reduction strategies for data centers. This is not unique to data centers; air and water economizers are also very effective in reducing energy consumption in commercial buildings (and are required by ASHRAE 90.1: Energy Standard for Buildings Except Low-Rise Residential Buildings). The difference is that data centers use much higher air and water temperatures to maintain required operating temperatures. Being able to use higher temperatures increases the number of hours per year where the economizer can be used. This increase is not trivial; if we compare using a 55°F supply-air temperature (typically used in commercial buildings) to an 80°F supply-air temperature in a data center, the number of hours the economizer is engaged is increased by 50% to 75%. When water-cooled computers are used, the number of economization hours increases even more when compared with a commercial building application. It must be noted that the reduction in energy use from using economization is highly dependent on the climate where the data center is located. Decision-making is accomplished using a multivariate process; variables include type of economization (air, water, direct, indirect, evaporative), supply-air temperature and moisture content, HVAC equipment type, and location (climate).
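The effect of supply-air temperature on economizer hours can be sketched by counting the hours in a year when outdoor air is cool enough to meet the setpoint. The Python example below uses a synthetic, sinusoidal temperature profile, which is purely an assumption standing in for real hourly weather data, and it ignores the moisture limits and equipment types that Kosik lists as variables. It is not intended to reproduce the 50% to 75% figure, only to show the direction of the effect and how strongly it depends on climate.

```python
import math

HOURS_PER_YEAR = 8760


def synthetic_hourly_dry_bulb_f(mean_f=60.0, seasonal_swing_f=25.0,
                                daily_swing_f=10.0):
    """Build a made-up year of hourly dry-bulb temperatures in degrees F.

    The seasonal term is coldest at the start of the year and the daily
    term is coldest at 3 a.m. A real study would use measured weather data.
    """
    temps = []
    for hour in range(HOURS_PER_YEAR):
        seasonal = seasonal_swing_f * math.sin(
            2 * math.pi * (hour / HOURS_PER_YEAR - 0.25)
        )
        daily = daily_swing_f * math.sin(
            2 * math.pi * ((hour % 24) / 24 - 0.375)
        )
        temps.append(mean_f + seasonal + daily)
    return temps


def economizer_hours(hourly_dry_bulb_f, supply_air_f, approach_f=0.0):
    """Count hours when outdoor air plus any approach is at or below setpoint."""
    return sum(1 for t in hourly_dry_bulb_f if t + approach_f <= supply_air_f)


weather = synthetic_hourly_dry_bulb_f()
hours_at_55 = economizer_hours(weather, 55.0)
hours_at_80 = economizer_hours(weather, 80.0)
print(hours_at_55, hours_at_80)
```

Changing `mean_f` and the swing parameters to mimic a hotter or milder site shows why the benefit has to be evaluated location by location.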

Voss: This is a case-by-case situation that is often driven by local building/energy codes.

Sedgwick: There seems to be a push toward using chillers with integrated economizer systems, air handling units that use various types of evaporative cooling, and computer room air conditioning units with free cooling cycles that circulate refrigerant without using a compressor during colder ambient conditions.