Designing solutions for data center clients, whether hyperscale or colocation facilities, requires advanced engineering knowledge.

Respondents

- Bill Kosik, PE, CEM, BEMP, senior energy engineer, DNV GL Technical Services, Oak Brook, Ill.

- John Peterson, PE, PMP, CEM, LEED AP BD+C, mission critical leader, DLR Group, Washington, D.C.

- Brian Rener, PE, LEED AP, principal, mission critical leader, SmithGroup, Chicago

- Mike Starr, PE, electrical project engineer, Affiliated Engineers Inc., Madison, Wis.

- Tarek G. Tousson, PE, principal electrical engineer/project manager, Stanley Consultants, Austin, Tex.

- Saahil Tumber, PE, HBDP, LEED AP, technical authority, ESD, Chicago

- John Gregory Williams, PE, CEng, MEng, MIMechE, vice president, Harris, Oakland, Calif.

CSE: What unique cooling systems have you specified into such projects? Describe a difficult climate in which you designed an HVAC system for a data center.

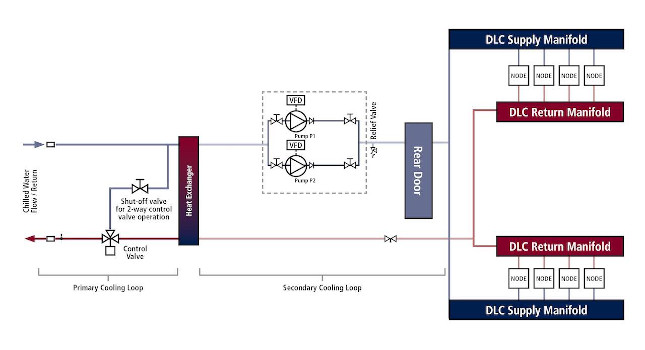

Tousson: Our design team specified a direct liquid cooling system, the latest trend in cooling for supercomputer solutions. The direct liquid cooled system comprises a primary loop and a secondary loop. The primary cooling loop is the traditional chilled water system, which runs through cooling distribution units (CDUs). Each CDU has a heat exchanger and redundant circulating pumps. Both the primary and the secondary cooling loops run through the heat exchangers of the CDUs. The secondary loop circulating pumps deliver the special coolant to the rack cooling door and manifolds for direct loop cooling of each compute node.

Peterson: Designing systems for critical facilities in warmer regions presents a unique challenge when aiming for best-in-class efficiency and performance. We have designed with the latest indirect evaporative cooling in hot and dry climates, where the low ambient wet-bulb temperature makes the most of heat rejection. In hot and wet climates, such as southern Florida, we perform an array of comparisons to ensure we meet the peak loads, and we add innovative controls with higher operational temperatures to extend economizer hours beyond the typical limits.
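
As a rough illustration of how a higher operating temperature lengthens the economizer season, the sketch below counts the hours in a wet-bulb temperature series during which an evaporative system could meet a supply-air setpoint. It is a simplified screening calculation and not any respondent's method. The function name, the fixed `approach_f` value and the sample temperatures are all assumptions; real indirect evaporative performance also depends on dry-bulb temperature, heat exchanger effectiveness and load.

```python
def economizer_hours(wet_bulb_f, supply_setpoint_f, approach_f=7.0):
    """Count hours when ambient wet-bulb plus an assumed approach
    is at or below the supply-air setpoint (all values in deg F).

    approach_f is a hypothetical fixed approach temperature; a real
    study would derive it from equipment performance data.
    """
    return sum(1 for wb in wet_bulb_f if wb + approach_f <= supply_setpoint_f)


# Hypothetical hourly wet-bulb readings (deg F), for illustration only.
sample_wet_bulb = [50.0, 60.0, 70.0, 75.0]

# Raising the setpoint from 65 F to 80 F admits more of the sample hours.
baseline = economizer_hours(sample_wet_bulb, supply_setpoint_f=65.0)
elevated = economizer_hours(sample_wet_bulb, supply_setpoint_f=80.0)
print(baseline, elevated)
```

Run against a full year of hourly weather data for a site, the same comparison gives a first-pass estimate of how many additional economizer hours an elevated setpoint might unlock.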

Rener: Where a data center is colocated with a larger office or similar program and uses a chilled water system, the temperatures at which the chilled water system operates are typically reduced based on what the building needs. Instead of designing the data center HVAC systems around this lower temperature, however, the data center with hot-aisle containment can be designed to operate using elevated temperatures closer to that of the chilled water return. This approach expands the chilled water system temperature differential and increases chiller efficiency. The approach also supports future trends and emerging technologies. HVAC equipment designed for elevated chilled water temperatures at standard air-cooled rack temperature differentials of 20°F to 25°F will likely be able to support increased temperature differentials up to 40°F if given the same chilled water temperatures needed by the building.
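
The effect of a wider rack temperature differential on airflow can be sketched with the standard sensible heat relation for air, q (Btu/h) = 1.08 × cfm × ΔT (°F). The 1.08 factor assumes standard sea-level air density, and the 10 kW rack load is a hypothetical example, so treat this as a back-of-envelope check and not a sizing calculation.

```python
BTU_PER_HR_PER_KW = 3412.14   # 1 kW expressed in Btu/h
AIR_SENSIBLE_FACTOR = 1.08    # Btu/(h * cfm * deg F), standard sea-level air


def required_airflow_cfm(it_load_kw, delta_t_f):
    """Airflow needed to remove a sensible IT load at a given
    supply-to-return air temperature differential (deg F)."""
    if delta_t_f <= 0:
        raise ValueError("delta_t_f must be positive")
    return it_load_kw * BTU_PER_HR_PER_KW / (AIR_SENSIBLE_FACTOR * delta_t_f)


# Hypothetical 10 kW rack at the differentials mentioned in the text.
for dt in (20.0, 25.0, 40.0):
    print(f"dT = {dt:.0f} F -> {required_airflow_cfm(10.0, dt):.0f} cfm")
```

Because airflow is inversely proportional to the differential, moving from 20°F to 40°F halves the air that must be moved for the same load (roughly 1,580 cfm down to about 790 cfm for the example rack).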

CSE: What unusual or infrequently specified products or systems did you use to meet challenging cooling needs?

Tumber: There are a number of products that have been designed specifically for the data center market. Each project is unique; there is no one-size-fits-all solution. The technologies I have used on data center projects include evaporative cooling (direct and indirect), outdoor direct expansion units that use heat pipes, heat wheels or plate-type heat exchangers for economization, liquid cooling, computer room air conditioning units and chillers with pumped refrigerant technology. These technologies have been deployed significantly over the past few years and are no longer considered unusual in the data center industry.

Peterson: To provide the flexibility to meet future cooling densities in data centers, we have long been open to grooved piping, which allows piping additions and modifications on the data center floor while it is operating. Being able to design a data center for liquid cooling anywhere on the floor is a tremendous advantage for the owners who are operating the data center for the next 20+ years.

CSE: How have you worked with HVAC system or equipment design to increase a building’s energy efficiency?

Kosik: One of my areas of expertise is energy analysis for data center projects, primarily in the early planning phases. One of the main goals is to identify, before the design starts, the best options for HVAC systems. Identifying these options requires a solid understanding of building energy modeling, data center operation and a deep technical understanding of HVAC systems. Using the output data from the energy analyses, we develop prioritizations for energy use, operating expense, reliability, how well the equipment responds to the IT load, footprint, climate impacts, etc. When we look at the energy use of the different options, there is typically a wide range from least to most efficient. The energy use of the conceptual design alternatives varies based on the simulated operating parameters, equipment efficiency and climate. This is where parametric analysis comes in handy, supplying the essential input and output data to the project team so the owner can make the best decision.
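
The mechanics of a parametric analysis can be sketched in a few lines: evaluate a model over every combination of input values and rank the results. The sweep helper below is generic. The `toy_annual_cooling_kwh` model and all of its input values are placeholders invented for illustration (it assumes, unrealistically, that economizer hours use no cooling energy) and stand in for the building energy simulation a real study would run.

```python
from itertools import product


def parametric_sweep(model, parameters):
    """Evaluate model(**case) for every combination of parameter values
    and return (case, result) pairs sorted from lowest to highest result."""
    names = list(parameters)
    results = []
    for values in product(*(parameters[name] for name in names)):
        case = dict(zip(names, values))
        results.append((case, model(**case)))
    return sorted(results, key=lambda pair: pair[1])


def toy_annual_cooling_kwh(it_load_kw, cooling_kw_per_kw_it, economizer_fraction):
    """Placeholder model: mechanical cooling energy for the hours of the
    year not covered by economizer operation. Not a real energy model."""
    mechanical_hours = 8760 * (1.0 - economizer_fraction)
    return it_load_kw * cooling_kw_per_kw_it * mechanical_hours


# Hypothetical input ranges, chosen only to exercise the sweep.
ranked = parametric_sweep(
    toy_annual_cooling_kwh,
    {
        "it_load_kw": [1000.0],
        "cooling_kw_per_kw_it": [0.2, 0.4],
        "economizer_fraction": [0.0, 0.5],
    },
)
for case, kwh in ranked:
    print(case, round(kwh))
```

Keeping the sweep separate from the model means the same ranking step can be reused when the placeholder is swapped for real simulation output, and the sorted list makes the spread from least to most efficient alternative easy to present to an owner.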

Rener: Instead of designing the data center HVAC systems around a lower chilled water temperature, the data center with hot-aisle containment can be designed to operate using elevated chilled water temperatures. This approach expands the chilled water system temperature differential in facilities with a combined program and increases chiller efficiency. For standalone facilities, the elevated chilled water temperature allows for increased economizer operation. If the data center demand far exceeds the demands of the building, then designing the chilled water system with elevated chilled water temperatures tailored to an optimized data center will result in significant savings. Smaller office programs could then operate independently or may use smaller chillers in series with the data center chillers to provide colder water for the office programs during the times of the year it is required. This approach allows the bulk of the cooling load to use elevated chilled water temperatures and enhances data center energy performance.
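
One consequence of a wider chilled water temperature differential is lower water flow for the same load, which follows from the common rule-of-thumb relation q (Btu/h) = 500 × gpm × ΔT (°F) for water. The sketch below applies it to a hypothetical 100-ton load; the load and the differentials are illustrative assumptions, and the 500 factor is an approximation for plain water near typical chilled water conditions.

```python
BTU_PER_HR_PER_TON = 12000.0   # 1 ton of refrigeration in Btu/h
WATER_FACTOR = 500.0           # approx. 8.33 lb/gal * 60 min/h * 1 Btu/(lb * deg F)


def chilled_water_gpm(load_tons, delta_t_f):
    """Chilled water flow (gpm) needed to carry a cooling load at a
    given supply-to-return water temperature differential (deg F)."""
    if delta_t_f <= 0:
        raise ValueError("delta_t_f must be positive")
    return load_tons * BTU_PER_HR_PER_TON / (WATER_FACTOR * delta_t_f)


# Hypothetical 100-ton load at a 10 F and a 20 F differential.
print(chilled_water_gpm(100.0, 10.0))  # 240.0
print(chilled_water_gpm(100.0, 20.0))  # 120.0
```

Doubling the differential halves the required flow, which is one reason an expanded chilled water differential can reduce pumping energy alongside the chiller efficiency gains described above.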

Peterson: When aiming to improve energy performance, one of the first things we investigate is the control schemes. Often the controls of the equipment in and around the data center are separate from those of a central cooling plant; by running several modes we can optimize for the best efficiency of the many motors in the overall cooling system.

CSE: What best practices should be followed to ensure an efficient HVAC system is designed for this kind of building?

Williams: Computational fluid dynamics (CFD) models that integrate thermal management of IT equipment and airflow distribution could be among the best design practices for developing and understanding an optimized design. Because the goal of cooling a data center is removing heat from the servers, storage units and the network system, the design efficiency cannot be determined without better knowledge of the equipment being developed. And all the equipment needs to meet the specifications. It is typical on a project to struggle to get all necessary design criteria for data center equipment, from basic load to operational temperatures, especially when these spaces are co-located and the developer may not have complete control of the installation.

Rener: Best practices assess the needs of the building and data center HVAC design independently for improved energy efficiency. Once these systems and approaches are understood for optimum energy efficiency, the system concepts are then studied together in light of their characteristics. A data center-dominated environment likely has a different solution from that of a smaller data center within a larger office or other program. Once the needs of each system and the relative cooling demands are understood, they can then be studied to find the best way to combine them in a common facility.

Peterson: Decades ago, data centers were implementing hot and cold aisles to improve airflow; today air containment has become an industry norm to separate supply and return air. This allows variable speed fans to supply only the air that is needed and not waste energy trying to prevent air mixing issues.
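
The energy benefit of supplying only the air that is needed can be approximated with the fan affinity laws, under which fan power varies with the cube of flow for a fixed system. The sketch below uses that idealized relation. It ignores motor and drive losses and any change in system resistance, so real savings will differ.

```python
def fan_power_fraction(flow_fraction):
    """Ideal fan power as a fraction of full-speed power, from the
    affinity-law relation power ~ flow**3 (fixed system, no drive losses)."""
    if flow_fraction < 0:
        raise ValueError("flow_fraction must be non-negative")
    return flow_fraction ** 3


# Illustrative flow reductions enabled by variable speed fans.
for flow in (1.0, 0.9, 0.8, 0.5):
    print(f"{flow:.0%} flow -> {fan_power_fraction(flow):.1%} of full power")
```

Under these assumptions, trimming airflow to 80% of design cuts ideal fan power to about half, which is why avoiding excess supply air matters so much once containment removes the need to overcome mixing.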

CSE: What type of specialty piping, plumbing or other systems have you specified recently? Describe the project.

Peterson: The return air temperature in a recent data center design was high enough for us to revisit the fire protection systems, how they are activated and their impact when they are used. Using a clean agent suppression system allows any fire, no matter how early, to be interrupted while protecting the IT equipment from the residue, conduction or corrosion associated with other methods.

CSE: What are some of the challenges or issues when designing for water use in such facilities?

Peterson: Often data center owners and operators are averse to water systems in or above the IT equipment spaces. We understand the desire to avoid the risk of possible leaks around energized equipment, and we can design accordingly, with piping run underfloor, routed around the space with protective barriers or kept entirely out of the IT equipment space.