An increasingly data-driven society demands advanced, high-performance data center facilities. Read on to learn the biggest challenges, emerging technologies and upcoming trends affecting data centers.

Respondents

David Anderson, PE, LEED AP

Senior Mechanical Engineer, Principal

Phoenix

Drew Carré, PE

Senior Electrical Engineer

Ambler, Pennsylvania

Terry G. Cleis Jr., PE, LEED AP

Vice President

Troy, Michigan

Matt Koukl, DCEP-G

Principal Project Manager, Mission Critical Market Leader

Madison, Wisconsin

Brian Rener, PE, LEED AP

Principal

Chicago

Saahil Tumber, PE, HBDP, LEED AP

Technical Authority

Environmental Systems Design Inc.

Chicago

CSE: What’s the biggest trend in data center projects?

David Anderson: We’re seeing increased heat load density and more processing load in less floor area. Owners and developers are asking design teams to maximize the return on their capital investment while keeping operating costs to a minimum, even as heat load density keeps increasing.

Drew Carré: The biggest trend I have noticed is the reduction of capital and operational costs. This has driven a lot of systems/equipment to become more efficient and less costly to purchase. For uninterruptible power supplies, this includes removing isolation transformers and using more efficient electronics or topologies. Modular systems have gained popularity, allowing for the UPS to more easily grow with the load and avoid running the systems at low loads and pulling down overall efficiencies. This also helps reduce the upfront cost for the UPS and batteries.
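The efficiency argument for modular UPS systems comes down to load fraction. The following is a minimal Python sketch, assuming hypothetical figures that do not come from the respondents (250 kW modules, a 300 kW day-one load, a 1,000 kW ultimate load and one redundant module). It compares how heavily the UPS is loaded when modules are added as the load grows versus when the full system is installed on day one:

```python
import math


def modules_required(load_kw: float, module_kw: float, redundant: int = 1) -> int:
    """Modules needed to carry load_kw, plus `redundant` spare modules."""
    if load_kw < 0 or module_kw <= 0 or redundant < 0:
        raise ValueError("load must be >= 0, module size > 0, redundant >= 0")
    return math.ceil(load_kw / module_kw) + redundant


def load_fraction(load_kw: float, installed_kw: float) -> float:
    """Share of installed UPS capacity that is actually carrying load."""
    if installed_kw <= 0:
        raise ValueError("installed capacity must be > 0")
    return load_kw / installed_kw


# Hypothetical values for illustration only.
MODULE_KW = 250.0
DAY_ONE_LOAD_KW = 300.0
ULTIMATE_LOAD_KW = 1000.0

# Grow-as-you-go: install only what the day-one load needs.
day_one_modules = modules_required(DAY_ONE_LOAD_KW, MODULE_KW)
grow_fraction = load_fraction(DAY_ONE_LOAD_KW, day_one_modules * MODULE_KW)

# Full build-out: install everything the ultimate load needs on day one.
full_modules = modules_required(ULTIMATE_LOAD_KW, MODULE_KW)
full_fraction = load_fraction(DAY_ONE_LOAD_KW, full_modules * MODULE_KW)

print(f"grow-as-you-go: {day_one_modules} modules, {grow_fraction:.0%} loaded")
print(f"full build-out: {full_modules} modules, {full_fraction:.0%} loaded")
```

Under these assumptions the incremental approach runs the UPS at a noticeably higher load fraction on day one, which is where most UPS topologies tend to be more efficient, and it defers the cost of the modules and batteries that are not yet needed.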

Terry G. Cleis Jr.: We’re providing systems that are flexible and can accommodate varying rack power and cooling requirements. This can occur either at select locations in a data center or throughout an entire data center. The first step is to determine the owner’s current and potential future equipment requirements, then use this information to establish the design parameters for the overall space. The overall infrastructure needs to be designed to accommodate the maximum equipment power and cooling requirements.

The design must also include appropriate cooling and power distribution to support the higher demands of equipment at the designated racks. In the past, most equipment could be kept cool by keeping the room cool and the power consisted of fairly standard branch circuits and devices. Newer equipment often requires cooling that is targeted at the equipment and significant power and associated distribution at each rack.

Matt Koukl: We’re seeing standardization and modularization. With the speed of facility deployment, systems need to be standardized and modular to allow for rapid scale and fast deployment of various mechanical, electrical and plumbing systems. Manufacturers that offer volumetric prefabricated systems can help those entities deploy systems and space at a rapid rate to meet aggressive schedules. While these systems may cost more, the benefit to the schedule seems to justify the costs.

Saahil Tumber: If I had to pick one, the biggest foreseeable trend is the continued growth of the data center market and the impact of data centers on our daily lives. The growth is being heavily driven by cloud computing, artificial intelligence, virtual reality, the “internet of things,” social media, networking platforms, cryptocurrency, autonomous vehicles, e-commerce and 5G networks, among other factors. All segments of the industry have ambitious goals for the near future and we expect rapid deployment of data centers to support these segments.

CSE: What trends do you think are on the horizon for data centers? Please discuss co-location facilities, hyperscale data centers and other types.

Carré: Enterprise data center users will continue to shrink their facilities and move processes/equipment to off-site locations whether it be co-location facilities (happening now) or hyperscale data centers (happening in the future). Only the processes that are required to be in-house will be maintained. Co-location has a lot to offer, as owners are no longer looking to invest in large facilities and equipment and the associated costs.

The hyperscale facilities will allow this to continue on a grander scale. Depending on the business involved, being in these facilities can allow for greater connection to the outside world via multiple providers, which would be expensive to provide otherwise.

Tumber: Data center providers are evaluating their business needs for the first half of the next decade. Enterprise and hyperscale segments are developing their next-generation data center designs to keep pace with their evolving requirements. This involves revisiting their design criteria, resiliency requirements, sustainability goals and the like and testing new technologies for implementation starting in 2020. The co-location segment is continuing to focus on improving metrics such as power usage effectiveness, cost/megawatt, speed to market, etc., and incorporating strategies to reduce stranded capacity and space.
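For readers less familiar with the metrics named above, power usage effectiveness is commonly defined as total facility energy divided by IT equipment energy, and cost per megawatt is taken here, as an assumption, to mean capital cost divided by critical IT capacity. A minimal Python sketch, with all input figures hypothetical:

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT energy must be > 0")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


def cost_per_megawatt(capital_cost: float, critical_it_mw: float) -> float:
    """Capital cost per megawatt of critical IT capacity (assumed definition)."""
    if critical_it_mw <= 0:
        raise ValueError("critical IT capacity must be > 0")
    return capital_cost / critical_it_mw


# Hypothetical annual figures for illustration only.
example_pue = pue(total_facility_kwh=13_000_000, it_equipment_kwh=10_000_000)
example_cost = cost_per_megawatt(capital_cost=80_000_000, critical_it_mw=10)

print(f"PUE: {example_pue:.2f}")
print(f"Cost per MW: ${example_cost:,.0f}")
```

A PUE closer to 1.0 means less of the facility's energy goes to cooling, power conversion and other overhead.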

Koukl: Co-location and hyperscale facilities will continue their development and edge facilities will further develop to meet content delivery and network latency reduction requirements. As that happens, edge facilities will be critically important to support the implementation of 5G and the further growth and adoption of autonomous vehicles. With these technologies driving content to be delivered and captured at the edge, local infrastructure will be needed to quickly process and respond to the various data requests.

Anderson: We’ll be seeing the use of nonraised floor computer rooms. With the increased heat load density on the computer room floor, there’s so much more airflow; we’re able to provide cooling without a raised floor. There is still a need to get the cooling where you need it in the room, adding complexities to making the system work effectively.

CSE: Each type of project presents unique challenges — what types of challenges do you encounter on projects for data centers that you might not face on other types of structures?

Koukl: Data centers are unique due to heavy plug loads not commonly seen in other types of facilities. The plug loads can range from a few kilowatts per cabinet to multiple hundreds of kilowatts per cabinet. Admittedly, the latter are very specialized systems, but it takes past experience with these special systems to understand the unique characteristics of their power and cooling systems and ensure proper operation.

Anderson: Ever-changing owner project requirements. As the owner or developer attempts to sign agreements with prospective customers, each with unique specifications, owner requirements are pushed and scope changes are requested or adjusted on a weekly basis. These requests can continue even through construction. The challenge is to avoid overdesigning the structure’s systems and overspending the owner’s capital investment, while still providing the requested changes to meet their customers’ requirements.

Cleis: Designing a data center inside of an existing facility can be particularly challenging. It can sometimes be difficult just to find the appropriate location in a facility. The location should be somewhat hardened and protected from incidents that are generated within the facility or from outside of the facility.

For example, this can mean avoiding the highest floor in a building (roof leaks or extreme weather events), avoiding floors that are below grade (below-grade water infiltration) or avoiding spaces with exterior windows or doors (intrusion prevention or wind-generated weather events), while at the same time providing desired adjacencies to building utilities and services that are required to support the functions within the data center. Additionally, for some clients this location also may need to be discreet, while still accessible to the people who work on and support the equipment within the space.

CSE: Tell us about a recent project you’ve worked on that’s innovative, large-scale or otherwise noteworthy. Please tell us about the location, systems your team engineered, key players, interesting challenges or solutions and other significant details.

Brian Rener: We recently completed designs for a new supercomputing center that will support artificial intelligence as part of a new computer science building for the Milwaukee School of Engineering. There were challenges to incorporating and highlighting a supercomputing data center within a higher education building. The supercomputer and its necessary heating, ventilation and air conditioning systems account for more than 50 percent of the building’s energy consumption while occupying only 5 percent of the floor area. Using hot-aisle containment allowed for a clean aesthetic of the space visible through the glass walls from the corridor and also allowed for a more efficient airflow and cooling strategy. The supply air to the space is being delivered at an elevated temperature, allowing for more efficient chiller operation and more free cooling hours.
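To put the energy and floor-area shares quoted above in perspective, the short Python sketch below compares energy use per unit of floor area in the supercomputing space with that of the rest of the building. It treats the figures as exactly 50 percent and 5 percent, which is an assumption; because the respondent says "more than 50 percent," the result is best read as a lower bound.

```python
def intensity_ratio(energy_share: float, area_share: float) -> float:
    """Energy per unit area in a zone, relative to the rest of the building."""
    if not (0 < energy_share < 1 and 0 < area_share < 1):
        raise ValueError("shares must be strictly between 0 and 1")
    zone_intensity = energy_share / area_share
    rest_intensity = (1 - energy_share) / (1 - area_share)
    return zone_intensity / rest_intensity


# Shares taken from the interview, rounded to exact values for illustration.
ratio = intensity_ratio(energy_share=0.50, area_share=0.05)
print(f"Roughly {ratio:.0f} times the energy per unit of floor area")
```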

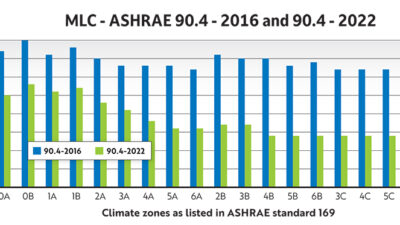

Tumber: I am working on a hyperscale data center project in the Northwest. It involves four data center buildings with a combined capacity in excess of 70 megawatts. The ASHRAE climate zone is 5B, which is conducive to economization and evaporative heat rejection. The project was challenging for numerous reasons. Major modifications were required to the owner’s baseline design due to site constraints. We had to work closely with equipment manufacturers and design custom equipment for the project due to unique requirements. The collaborative effort lasted several months. Also, the owner requirements changed considerably, which voided the original master planning for the site. That necessitated strategic modifications to existing infrastructure without impacting the live data centers.

CSE: How are engineers designing data centers to keep costs down while offering appealing features, complying with relevant codes and meeting client needs?

Carré: One way to achieve cost-effective design solutions is to provide redundancy without providing double the equipment. Catcher UPS and generator systems allow data centers to run equipment to 66 percent capacity, which will also provide for better efficiencies than running a 2N system at 45 to 50 percent load. Lower battery run times have also become a trend of late. In the past, 15 minutes was typical; now, however, our team is often specifying a 6- to 9-minute range. As lithium-ion technology continues to develop, greater savings will be achieved through minimized battery space and structural requirements, along with potentially longer life than a valve-regulated lead-acid battery.
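The loading figures above follow from simple arithmetic on installed capacity. The Python sketch below is one plausible reading, not the respondent's calculation: it assumes a hypothetical arrangement in which two modules' worth of capacity is needed to carry the load, and compares a 2N system (two more modules as a full duplicate) with a catcher system (one shared standby module).

```python
def max_utilization(needed_modules: int, redundant_modules: int) -> float:
    """Largest share of installed capacity that can carry load while
    still tolerating the loss of `redundant_modules` modules."""
    if needed_modules <= 0 or redundant_modules < 0:
        raise ValueError("needed_modules must be > 0 and redundant_modules >= 0")
    return needed_modules / (needed_modules + redundant_modules)


# Hypothetical module counts for illustration only.
two_n = max_utilization(needed_modules=2, redundant_modules=2)    # full duplicate
catcher = max_utilization(needed_modules=2, redundant_modules=1)  # one catcher

print(f"2N ceiling:      {two_n:.0%}")
print(f"Catcher ceiling: {catcher:.0%}")
```

Under these assumptions the 2N ceiling is 50 percent and the catcher ceiling is about two-thirds, which lines up with the 45 to 50 percent and 66 percent figures quoted above once some operating margin is allowed for.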

Tumber: It begins with engineers having a firm understanding of the project goals and objectives, so they can recommend tailored solutions and set expectations. Engineers also need to be cognizant of the interdependencies between cost, scope, quality, safety and schedule. For example, if cost exceeds expectations, then other criteria such as scope may need to be relaxed. Basically, controlling costs on a project requires collaboration from all stakeholders. Tradeoffs are frequently necessary and engineers need to drive that effort.

CSE: How are data center buildings being designed to be more energy efficient?

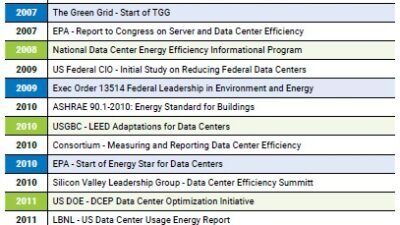

Tumber: Designing an energy-efficient data center requires a holistic approach and there are multiple strategies that can be leveraged, such as judiciously selecting the data center site where possible to benefit from ambient conditions, expanding the thermal envelope to increase hours of economization, implementing heat recovery systems where feasible, using advanced UPS technology (such as transformer-free UPS and eco-mode operation), using high-efficiency transformers and power distribution units, reducing voltage transformation steps, optimizing controls and sequences of operation and optimizing information technology infrastructure (via consolidation, virtualization, decommissioning, etc.).

Koukl: Data centers are becoming more efficient as equipment vendors develop more innovative products that reduce energy use. Recently, a number of vendors have turned to different forms of refrigerant economizers to further support economization.

Another method that is gaining traction, and will continue to, is direct-to-chip cooling, whether with water or some other liquid. This has been talked about for decades, but with central processing units and graphics processing units approaching a thermal limit that will require more U-space to cool with air, a different approach is needed.

Rener: Elevated temperatures, hot-aisle containment, energy recovery and air-side and water-side economization.

Carré: Separating equipment that requires air-conditioned rooms from equipment that only requires ventilation will reduce HVAC costs without any adverse effects on equipment. Keeping as much heat-producing equipment as possible off the raised floor can achieve similar results. Common techniques include aisle containment, which avoids cooling the entire space when only portions of it need the cold air, and HVAC equipment that communicates so that all units operate together rather than working against each other. Mechanical systems that can ramp up and down via variable frequency drives run more efficiently at lower loads while still allowing for higher capacities as the load grows.
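The benefit of variable frequency drives at part load can be approximated with the ideal fan affinity laws, under which power scales with the cube of speed. The Python sketch below is an idealized estimate only: it assumes a fan or pump that follows the affinity laws exactly and ignores drive losses, motor losses and fixed static pressure, so real savings will be smaller.

```python
def ideal_power_fraction(speed_fraction: float) -> float:
    """Ideal affinity-law estimate: power scales with the cube of speed."""
    if not 0.0 <= speed_fraction <= 1.0:
        raise ValueError("speed_fraction must be between 0 and 1")
    return speed_fraction ** 3


# Idealized power draw at a few hypothetical operating speeds.
for speed in (1.0, 0.8, 0.6):
    power = ideal_power_fraction(speed)
    print(f"{speed:.0%} speed -> about {power:.0%} of full-speed power")
```

Even a modest speed reduction yields a disproportionately large drop in ideal power draw, which is why equipment that can slow down at low load and speed up as the load grows tends to operate more efficiently than fixed-speed equipment.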

CSE: What is the biggest challenge you come across when designing data centers?

Cleis: Determining the appropriate levels of redundancy and reliability for each system that supports the data center, then determining the best system options to provide that redundancy and reliability. This evaluation includes energy costs, first cost of equipment, cost of preventive maintenance, replacement costs, life expectancy of equipment and ease of maintenance.

This often requires the design professional to be the communication bridge between the owner’s various stakeholders. These stakeholders include those concerned with the financial portion of the project who want to ensure that the capital is assigned appropriately, the facility engineers who are tasked with maintaining the critical cooling and power support systems and the technical staff who work on the computing equipment inside the space and want the systems to perform to suit their needs.

Tumber: One of the biggest challenges is identifying and agreeing on the project requirements. Unlike other projects, there are myriad stakeholders in a data center project such as facility engineering, facility operations, finance, procurement, security, real estate, IT and others. The stakeholders will have different and occasionally contradictory interests and objectives. Designing a data center that satisfies all individuals can be challenging. Collaboration is critical to ensure the team works toward a common goal. We typically see more collaboration among the internal teams of hyperscale clients.

Carré: The biggest challenge currently is the direct and indirect effects of trying to minimize the space required for the infrastructure and maximize the space available for IT equipment. This is largely driven by co-location-type facilities. As the space for infrastructure is reduced, greater coordination between the trades, architect and engineer is required. Early and frequent communication can solve many of the challenges and accurate three-dimensional modeling can also be a helpful tool. Additionally, space allocated for future equipment must not only accommodate that equipment but also be flexible enough to work with several vendors and potential capacities.