ASHRAE 90.4 is a new energy standard for data centers that calls for meeting minimum efficiency requirements.

Learning objectives:

- Explain ASHRAE 90.4: Energy Standard for Data Centers.

- Explore the performance requirements of ASHRAE 90.4 versus the prescriptive requirements of ASHRAE 90.1-2007, addendum bu.

- Calculate energy efficiency in data centers.

When addendum “bu” was added to ASHRAE 90.1-2007: Energy Standard for Buildings Except Low-Rise Residential Buildings in 2010, it caught the data center industry off guard. While data center operators had considerable representation on ASHRAE TC9.9: Mission Critical Facilities, Data Centers, Technology Spaces and Electronic Equipment, the technical committee that shapes the environmental standards for data centers, the ASHRAE 90.1 Standing Standards Project Committee (SSPC 90.1) was something else altogether. This addendum added significant prescriptive requirements to ASHRAE 90.1 for air- and water-side economizers in data center HVAC systems. Up until that point, data center HVAC systems were effectively exempt from energy code requirements. The major players in the data center industry reacted strongly to these changes, and the resulting firestorm helped shape future standards development, namely ASHRAE 90.4-2016: Energy Standard for Data Centers.

What was 90.1-2007 addendum bu?

Addendum bu added a new definition to ASHRAE 90.1: “computer room.” This definition was as follows:

A room whose primary function is to house equipment for the processing and storage of electronic data and that has a design electronic data equipment power density exceeding 20 W/sq ft of conditioned floor area.

This definition has been altered substantially in recent versions of the code, which will be discussed.

The major change attributed to addendum bu was the addition of economizer requirements for cooling systems with fans that serve computer rooms. This more or less aligned data centers with requirements for HVAC systems in other types of buildings and affected sections 6.4.1.1, 6.5.1, and Table 6.8.1H within 90.1-2007. There were several exceptions to the economizer requirement, including when:

- The total combined design load of all computer rooms in a building is less than 3,000,000 Btu/h (250 tons) and the rooms are not chilled-water cooled.

- The rooms are chilled-water cooled and the room design load is less than 600,000 Btu/h (50 tons).

- Less than 600,000 Btu/h (50 tons) of computer room cooling is being added to an existing building.

- The authority having jurisdiction (AHJ) does not allow cooling towers.

- At least 75% of the design load is critical in nature (e.g., NEC Article 708: Critical Operations Power Systems, Tier IV data centers, and data centers that process financial transactions).

The economizer provision affected every climate zone except zones 1A and 1B (in the United States, typically only tropical regions, such as the southern tip of Florida, Puerto Rico, Guam, and Hawaii, are in these climate zones).
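The exceptions above can be read as a simple screening check. The following is a minimal sketch that encodes only this article's summary of the exceptions, not the text of addendum bu itself; the class, field, and function names are hypothetical and chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional

BTU_PER_HR_PER_TON = 12_000  # 1 ton of refrigeration = 12,000 Btu/h


@dataclass
class ComputerRoomProject:
    """Hypothetical project inputs; field names are illustrative only."""
    design_load_btuh: float            # combined computer room design load
    chilled_water_cooled: bool
    added_to_existing_building: bool
    ahj_prohibits_cooling_towers: bool
    critical_load_fraction: float      # 0.0 to 1.0
    climate_zone: str                  # for example "5A"


def economizer_exception(p: ComputerRoomProject) -> Optional[str]:
    """Return the first exception (as summarized in the article) that applies, else None."""
    if p.climate_zone in {"1A", "1B"}:
        return "climate zone 1A/1B"
    if p.critical_load_fraction >= 0.75:
        return "at least 75% critical load"
    if p.ahj_prohibits_cooling_towers:
        return "AHJ prohibits cooling towers"
    if p.added_to_existing_building and p.design_load_btuh < 600_000:
        return "addition under 50 tons"
    if p.chilled_water_cooled and p.design_load_btuh < 600_000:
        return "chilled-water room under 50 tons"
    if not p.chilled_water_cooled and p.design_load_btuh < 3_000_000:
        return "non-chilled-water load under 250 tons"
    return None


# Hypothetical 200-ton, non-chilled-water project in climate zone 5A
project = ComputerRoomProject(200 * BTU_PER_HR_PER_TON, False, False, False, 0.2, "5A")
print(economizer_exception(project))
```

The order of the checks is arbitrary; an actual code review would follow the addendum's own wording and the AHJ's interpretation.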

Why did the data center industry object to bu?

While the foreword to the addendum clearly outlined ASHRAE’s justification for the new requirements and exceptions contained in it, the data center industry still had unusually strong objections. The primary issue was that the requirements were “prescriptive,” mandating specific design solutions. The data center industry’s argument was that in a sector experiencing explosive growth and change, designers should not be limited by prescriptive requirements that stifle technical innovation. Rather, the preference was that the requirements be performance-based and focus solely on quantifying energy efficiency, not the exact methods used to achieve that efficiency.

The data center industry’s most public response to addendum bu was published on Google’s public policy blog in April 2010. Google traditionally reserves use of this widely read forum for official commentary on privacy, net neutrality, antitrust, and similar major regulatory topics. This open-letter response was signed by a who’s who of the data center industry. ASHRAE did issue a formal response to the open letter, maintaining that the new exceptions, in combination with alternative compliance paths such as the energy cost budget method, reasonably addressed those concerns. By this point, however, the lines had already been drawn between the two groups.

Why did efficiency requirements suddenly apply to data centers?

As with all new codes and standards, nothing happens quickly. Changes are generally the result of a long, deliberate sequence of events. ASHRAE has long considered addressing data center energy efficiency in ASHRAE 90.1. In August 2007, the EPA issued a report on data center energy efficiency to the U.S. Congress. The key takeaway from this report was that the nation’s data centers were responsible for about 1.5% (61 billion kWh) of total U.S. electrical consumption in 2006. The report further forecasted that this use would double by 2012. Now with the issue quantified, the wheels of change could be set in motion. Given this dramatic projected increase in energy usage, adoption of new energy efficiency requirements would be justifiable per the key provisions of federal energy efficiency legislation in effect at that time, the Energy Policy and Conservation Act of 1975 (EPCA) and the Energy Policy Act of 1992 (EPAct).

Those projections ended up being wrong. Based on a Lawrence Berkeley National Laboratory report issued 9 years later in 2016, usage did not increase nearly as dramatically as predicted. Instead of doubling, data center electrical usage was estimated at about 1.8% (70 billion kWh) of total 2014 U.S. electrical consumption. This represents only a 15% increase from 2006 levels. Ironically, the forecasting error is mostly attributed to the emergence of hyperscale/cloud data centers—which include many of the signatories to Google’s open letter who have since embraced forms of air and water economizers in a significant percentage of their data centers. Google, in fact, has managed to reduce their fleetwide trailing 12-month power-usage effectiveness (TTM PUE) in 2016 to 1.12, even when using fairly conservative metrics.
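The gap between the forecast and the outcome is easy to check from the figures quoted above. This short sketch uses only the two consumption values cited in the text; the variable names are illustrative.

```python
usage_2006_kwh = 61e9  # EPA estimate for 2006, as cited above
usage_2014_kwh = 70e9  # LBNL estimate for 2014, as cited above

# Forecast: usage doubles from the 2006 level
forecast_kwh = 2 * usage_2006_kwh

# Actual growth relative to 2006
increase = (usage_2014_kwh - usage_2006_kwh) / usage_2006_kwh

print(f"Forecast: {forecast_kwh / 1e9:.0f} billion kWh")
print(f"Actual increase from 2006 to 2014: {increase:.1%}")
```

The computed increase is just under 15%, consistent with the figure in the text.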

The emergence of ASHRAE 90.4

Traditionally, energy codes have addressed only HVAC systems used for comfort cooling and heating and left out requirements for systems related to process cooling/heating. With the aforementioned conflicts over new data center efficiency requirements starting with the addendum to ASHRAE 90.1-2007, members of the technical committee for TC9.9 initiated a new standard in response to those perceived shortcomings. After almost 5 years of work, the result was ASHRAE 90.4-2016: Energy Standard for Data Centers.

Even though ASHRAE 90.4 was a consensus standard with input from numerous industry groups, there was still significant heated debate, specifically over minimum acceptable efficiency levels and associated measurement metrics. One of the first hurdles was agreeing on a formal definition for a “data center.” As mentioned previously, addendum bu had added an unusually broad definition for a “computer room.” Based on that earlier definition, there would be no useful distinction between an intermediate distribution frame (IDF) closet in an office building and a large, hyperscale data center. The proposed addendum cs to ASHRAE 90.1-2010 laid the groundwork for ASHRAE 90.4 by attempting to revise the definition of computer rooms. That addendum also introduced a new definition, “data center,” to which ASHRAE 90.4 now applies. Those two definitions have since been revised in subsequent addenda to ASHRAE 90.1. The final definitions as incorporated into ASHRAE 90.4 distinguish between these two occupancy types by adding power usage thresholds for each. The revised definition in ASHRAE 90.4 for a computer room is as follows:

A room or portions of a building serving an information technology equipment (ITE) load less than or equal to 10 kW or 20 W/sq ft or less of conditioned floor area.

A data center is defined by ASHRAE 90.4 as follows:

A room, building, or portions thereof, including computer rooms being served by the data center systems, serving a total ITE load greater than 10 kW or 20 W/sq ft of conditioned floor area.
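The two definitions can be sketched as a simple classification. This is only one possible reading and rests on an assumption: the computer room definition is treated as controlling (at or below either threshold), and anything else is a data center, so that the two categories do not overlap. The function name and inputs are hypothetical.

```python
def classify_space(ite_load_kw: float, conditioned_area_sqft: float) -> str:
    """Classify a space using the 10 kW and 20 W/sq ft thresholds quoted above.

    Assumption: a space at or below EITHER threshold is a computer room;
    only a space above both is treated as a data center.
    """
    if conditioned_area_sqft <= 0:
        raise ValueError("conditioned area must be positive")
    density_w_per_sqft = ite_load_kw * 1000.0 / conditioned_area_sqft
    if ite_load_kw <= 10.0 or density_w_per_sqft <= 20.0:
        return "computer room"
    return "data center"


print(classify_space(8, 200))       # small IDF-style closet
print(classify_space(500, 10_000))  # 50 W/sq ft facility
```

Under this reading, an 8 kW closet remains a computer room regardless of its density, while a 500 kW facility at 50 W/sq ft is a data center. The standard's own wording and the AHJ's interpretation govern in practice.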

This distinction is important. ASHRAE 90.1 still applies to computer rooms but does not apply to the mechanical and electrical distribution systems in data centers. However, ASHRAE 90.1 still applies to other portions of data centers, namely envelope, service-water heating, and lighting. Neither standard applies to mechanical and electrical equipment in telephone exchanges or essential facilities (e.g., NEC Article 708: Critical Operations Power Systems, Tier IV data centers, and data centers that process financial transactions).

Efficiency is meaningless if you can’t calculate it

A primary point of contention during the writing of ASHRAE 90.4 was how to quantify minimum efficiency requirements. The Green Grid Association, a consortium of data center industry companies that works to improve the efficiency of data centers worldwide, developed a metric known as power usage effectiveness (PUE). PUE is the ratio of the total energy used by a data center to the energy used specifically by the ITE. It is the most widely accepted efficiency metric in the industry. However, it has a few shortcomings:

- It indicates nothing about the efficiency of the IT equipment itself. Since it does not consider productivity (how much compute processing capability per unit of data center input power or percentage usage of computer server equipment), underused IT equipment can skew the energy profile of the overall facility.

- While it is useful in providing insight into how energy usage changes in response to changes in a data center’s infrastructure (deployment of new servers, changes to how air conditioning equipment is operated, etc.), it is based on actual measured energy usage of an active data center. It can change dramatically based on changes in uncontrollable factors (e.g., server use and weather). It is not especially useful for theoretical baseline calculations performed during the initial design of the data center.

- Depending on the Green Grid level of metering (where or how it is measured), you may not capture all measured energy consumption in a way that is directly comparable to otherwise similar facilities.
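For reference, the basic PUE arithmetic is shown below. This is a minimal sketch; the function name and the metered values are hypothetical, and it deliberately ignores the metering-level questions raised above.

```python
def pue(total_facility_kwh: float, ite_kwh: float) -> float:
    """Power usage effectiveness: total facility energy divided by ITE energy."""
    if ite_kwh <= 0:
        raise ValueError("ITE energy must be positive")
    return total_facility_kwh / ite_kwh


# Hypothetical trailing 12-month meter readings
total_kwh = 1_120_000.0
ite_kwh = 1_000_000.0
print(f"PUE = {pue(total_kwh, ite_kwh):.2f}")
```

A PUE of 1.0 would mean every kilowatt-hour entering the facility reaches the ITE. The result says nothing about how productively the ITE uses that energy, which is the first shortcoming listed above.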

The ASHRAE 90.4 committee initially tried, during early draft revisions of the standard, to apply a concept that they also called PUE, but potential confusion over comparisons to the Green Grid version of PUE eventually led to the elimination of PUE nomenclature from ASHRAE 90.4.

In place of PUE, ASHRAE 90.4 brought forth two new metrics, electrical loss component (ELC) and mechanical load component (MLC). A conscious decision was made to address mechanical system and electrical distribution system efficiencies separately. The ultimate goal of separating the two was to provide more design flexibility. If either the mechanical or electrical systems didn’t meet the minimum efficiency level required by the standard, an alternative compliance path could be used where trade-offs would be allowed. For example, if replacement of a major portion of the electrical distribution system (e.g., deployment of a new, larger uninterruptible power supply, or UPS) triggered a compliance requirement for an existing facility, replacement of an otherwise functional existing mechanical system that didn’t meet the minimum required efficiency could potentially be avoided by an offsetting increase in the efficiency of that new electrical distribution equipment. The associated minimum-efficiency threshold levels were structured to effectively create an 80/20 policy where, after trade-offs are considered, generally only the bottom 20% of existing facilities will be forced into upgrades.

Electrical loss component

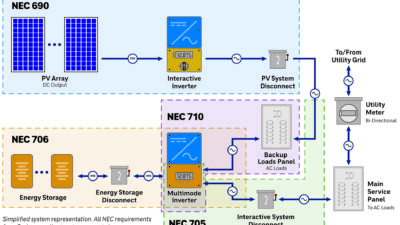

ELC quantifies the inefficiencies/losses of different parts of the electrical distribution system, from the utility service entrance all the way through to the receptacle at the ITE cabinet. The ELC value is the fractional loss (e.g., a 75% overall efficiency equates to an ELC of 0.25). There are three distinct segments of this power path defined by ASHRAE 90.4 as follows:

- Incoming electrical-service segment (from the utility service disconnect/demarcation to the UPS input)

- UPS segment (limited to the UPS equipment and any associated paralleling gear for multimodule designs)

- ITE distribution segment (from the UPS output to the end of the branch circuit at the point of use/receptacle including all transformers/power distribution units, remote power panels, busduct, branch conductors, etc.)

The focus here is solely on the portion of the electrical distribution system that delivers power to the data center ITE load. If multiple paths to the ITE exist, the pathway with the greatest losses needs to be used in the calculation. However, losses attributed to the portion of the electrical distribution system serving associated supporting systems (computer room air conditioning units, chillers, lighting, etc.) are not included in ELC. Also, emergency/standby generator systems that are normally “off” are not considered as part of ELC calculations.
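A rough way to picture the calculation: if the three segments are treated as acting in series, their efficiencies multiply, and the ELC is one minus that product. The sketch below rests on that series assumption and uses hypothetical segment efficiencies and function names; it does not reproduce the standard's own procedure or table limits.

```python
from math import prod


def elc(segment_efficiencies):
    """ELC for one power path: 1 minus the product of its segment efficiencies."""
    return 1.0 - prod(segment_efficiencies)


def governing_elc(paths):
    """Return (path name, ELC) for the path with the greatest losses."""
    name = max(paths, key=lambda n: elc(paths[n]))
    return name, elc(paths[name])


# Hypothetical efficiencies: (incoming service, UPS, ITE distribution)
paths = {
    "A side": (0.99, 0.94, 0.97),
    "B side": (0.99, 0.92, 0.97),
}
name, value = governing_elc(paths)
print(f"{name} governs: ELC = {value:.3f}")
```

Here the B side has the less efficient UPS, so it is the path that would be carried into the compliance check. Loads such as chillers, lighting, and standby generators do not appear because they are outside the ITE power path.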

The ELC calculation for the UPS segment also recognizes that efficiency is affected by the physical size of the UPS system and by the level of redundancy. For example, it is expected that in a fully redundant 2N UPS system, neither UPS will be loaded to more than 50% under normal operating conditions. A lightly loaded UPS will generally be less efficient than a more heavily loaded UPS with less redundancy. As such, maximum allowable ELC values as detailed in tables 8.2.1.1 and 8.2.1.2 include the following considerations:

- Is total ITE design load either less than or greater than 100 kW?

- Is UPS system configuration single-feed (N, N+1, etc.) or dual-feed with two distinct output busses (2N, 2N+1, etc.)?

ELC calculations must be made at two different load points (100% and 50% of the load expected at each UPS). For example, ELC calculations for a 1N UPS would be made at 100% and 50% and those for a 2N UPS would be made at 50% and 25%.
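The load points in that example follow from dividing the ITE load across the independent UPS paths. A minimal sketch, assuming the load splits evenly between paths; the function name and parameter are hypothetical.

```python
def ups_load_points(independent_paths: int):
    """Per-UPS load fractions at which ELC is evaluated.

    independent_paths: 1 for single-feed (N, N+1), 2 for dual-feed (2N, 2N+1).
    Assumes the ITE load is shared evenly across the paths.
    """
    if independent_paths < 1:
        raise ValueError("at least one UPS path is required")
    return (1.0 / independent_paths, 0.5 / independent_paths)


print(ups_load_points(1))  # single-feed
print(ups_load_points(2))  # dual-feed 2N
```

For a single-feed system this gives 100% and 50%; for 2N it gives 50% and 25%, matching the example above.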

Mechanical load component

MLC takes a slightly different calculation approach than ELC. MLC is the sum of all data center HVAC equipment power usage (including humidification, if present) divided by the baseline ITE design power. Unlike ELC, where the overall system is subdivided into three segments, MLC does not emphasize the distinction between individual HVAC system components. Rather, the overall power usage of the data center’s HVAC system is the key metric. The only other consideration is that the maximum acceptable MLC value changes depending on the climate zone in which the facility is located. This may make HVAC technologies that are more effective in certain climate zones more attractive there. Regardless, this focus on the system rather than the individual components effectively makes MLC performance-based and not prescriptive, which addresses some of the intense criticism directed at addendum bu for ASHRAE 90.1.
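As a sketch of the arithmetic, MLC is a single ratio for the whole HVAC system. The equipment list, power values, and function name below are hypothetical, and no climate-zone limit from the standard is reproduced.

```python
def mlc(hvac_power_kw: dict, ite_design_kw: float) -> float:
    """Mechanical load component: total HVAC power divided by ITE design power."""
    if ite_design_kw <= 0:
        raise ValueError("ITE design power must be positive")
    return sum(hvac_power_kw.values()) / ite_design_kw


# Hypothetical HVAC power draw at 100% ITE design load
hvac_kw = {
    "chillers": 180.0,
    "pumps": 30.0,
    "CRAH fans": 60.0,
    "humidification": 10.0,
}
print(f"MLC = {mlc(hvac_kw, 1000.0):.2f}")
```

Because only the total matters, a design can offset a less efficient component with a more efficient one elsewhere in the HVAC system. The result would then be compared against the maximum MLC for the facility's climate zone.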

To give the design team more flexibility, there are two MLC compliance paths—power and energy. ASHRAE 90.4 does not mandate that one or the other be used in specific situations. Rather, the decision concerning which path is more appropriate is left to the design team. The power-compliance path calculates peak MLC at both 100% and 50% ITE design loads (kW). The energy path calculates annualized MLC at both 100% and 50% ITE design loads (kWh). Ultimately, the determining factor for which path is more appropriate lies in whether seasonal variability in weather at the data center location will hurt or help overall HVAC system efficiency.
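The difference between the two paths can be illustrated with a hypothetical hourly HVAC power profile. This sketch reads "peak" as the worst hour of the year and "annualized" as total HVAC energy over total ITE energy at a constant ITE load; both readings, the profile, and the function names are assumptions for illustration only.

```python
HOURS_PER_YEAR = 8760


def peak_mlc(hourly_hvac_kw, ite_kw):
    """Power path: worst-hour HVAC power divided by ITE design power."""
    return max(hourly_hvac_kw) / ite_kw


def annualized_mlc(hourly_hvac_kw, ite_kw):
    """Energy path: annual HVAC energy divided by annual ITE energy."""
    hvac_kwh = sum(hourly_hvac_kw)          # one-hour samples, so kW equals kWh
    ite_kwh = ite_kw * len(hourly_hvac_kw)  # constant ITE load over the same hours
    return hvac_kwh / ite_kwh


# Hypothetical site: economizer hours for half the year, mechanical cooling otherwise
profile = [150.0] * (HOURS_PER_YEAR // 2) + [300.0] * (HOURS_PER_YEAR // 2)
ite_kw = 1000.0

print(f"Peak MLC:       {peak_mlc(profile, ite_kw):.3f}")
print(f"Annualized MLC: {annualized_mlc(profile, ite_kw):.3f}")
```

In this example the annualized value is well below the peak value, so a site with many economizer hours would likely favor the energy path. A site with little seasonal variation would see the two values converge.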

How existing facilities are addressed

The very first question that many clients will nervously ask is if ASHRAE 90.4 applies to their existing data center. The answer is a definite “maybe.” Since this is a new standard, it is unclear exactly how individual AHJs will interpret and apply it. Some of the language, especially as it pertains to alterations, is somewhat confusing. However, ASHRAE 90.4 does attempt to make a distinction between new data centers, additions to existing data centers, and finally, alterations to existing data centers. The applicability is as follows:

- All provisions of ASHRAE 90.4 apply to new data centers.

- ASHRAE 90.4 applies to an addition only if it increases area or connected load by 10% or more.

- Alterations shall comply, provided that compliance will not result in the increase of energy consumption for the building. An alteration is defined as “replacement not in kind.”
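The 10% test for additions can be sketched as follows. The function and parameter names are hypothetical, and the alteration rule is left out because it depends on a judgment about resulting energy consumption that this article does not quantify.

```python
def addition_triggers_compliance(
    existing_area_sqft: float,
    added_area_sqft: float,
    existing_load_kw: float,
    added_load_kw: float,
) -> bool:
    """True if an addition increases area or connected load by 10% or more."""
    area_increase = added_area_sqft / existing_area_sqft
    load_increase = added_load_kw / existing_load_kw
    return area_increase >= 0.10 or load_increase >= 0.10


# Hypothetical addition: 5% more area but 15% more connected load
print(addition_triggers_compliance(20_000, 1_000, 1_000, 150))
```

Either threshold is enough on its own, so a dense addition with a small footprint can still bring the standard into play. How an AHJ measures "area" and "connected load" is an open question.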

When will ASHRAE 90.4 take effect?

SSPC 90.4’s original goal was to have the issuance of ASHRAE 90.4 coincide with the release of ASHRAE 90.1-2016. However, after the first public review of ASHRAE 90.4, more than 600 comments were received in 45 days. While the sheer volume of comments was not totally unexpected, it did slow down the adoption process. Per the ANSI requirements for a consensus-based standard-making process, any issues raised during the public commentary period must be formally addressed prior to acceptance of the standard. Ultimately, this meant that final approval was delayed until mid-2016.

While placeholders referencing ASHRAE 90.4 were incorporated into ASHRAE 90.1-2016, it is unclear if ASHRAE 90.4 will be adopted by the International Code Council (ICC) during the current code-development cycle for inclusion in the 2018 International Energy Conservation Code (IECC). Proposals for inclusion by reference were brought before the ICC 2016 Group B Committee last year but were rejected. The rejection wasn’t necessarily because of any inherent flaw in ASHRAE 90.4. Rather, the standard was new and had not been reviewed. Regardless, if the prevailing energy code in a particular jurisdiction is IECC, it is doubtful that ASHRAE 90.4 will be enforced any time in the immediate future for those locations.

Research fact:

62% of engineers identified energy efficiency to be a critical challenge affecting the future of HVAC systems in data centers. Source: Consulting-Specifying Engineer 2015 HVAC and Building Automation Systems Study

John Yoon is a lead electrical engineer at McGuire Engineers Inc. and is a member of the Consulting-Specifying Engineer editorial advisory board.