ASHRAE Standard 90.4: Energy Standard for Data Centers guides engineers in designing mechanical and electrical systems in data centers.

Learning objectives

- Become familiar with ASHRAE Standard 90.4-2016: Energy Standard for Data Centers.

- Learn how to define data center energy use.

- Calculate energy-reduction options within data centers.

In 1999, a prescient article authored by Forbes columnist Peter Huber titled "Dig More Coal—the PCs Are Coming" focused on energy use attributable to the internet. For one of the first times, the topic of data center energy use was introduced in a major mainstream publication.

Nearly 20 years later, the industry is still on a quest, diligently working on tactics and strategies to curb energy use while maintaining data center performance and reliability. During this time, dozens of cooling and power system designs have been developed to shrink electricity bills through energy efficiency improvements. Building on these advances, manufacturers are now producing equipment purpose-built for the data center. Previously, HVAC engineers were mostly limited to designing around standard commercial cooling equipment, which generally could not meet the demands of a critical facility.

As the industry matured (domestically and globally), efforts ramped up to reduce energy use in data centers and other technology-intensive facilities. These programs, while different in scope and detail, all had a similar goal: develop noncompulsory, consensus-driven best practices and guidelines on optimizing data center energy efficiency and resource use. It was truly a watershed moment as these programs manifested into actual design and reference documentation, providing vital access to data on design and operations; prior to this time, finding consistent, verifiable instruction on how to improve data center energy efficiency was not easy. Today, worldwide, these documents are numerous and come from diverse sources.

When organizations such as ASHRAE are developing official standards, the current state of the industry is certainly taken into consideration in an attempt to avoid releasing language that is overly stringent (or too lax), possibly resulting in unintended outcomes. Undoubtedly some of the grass-roots best practices and design approaches that were already in place ultimately influenced modifications to the commonly used energy efficiency standard, ASHRAE 90.1: Energy Standard for Buildings Except Low-Rise Residential Buildings, to create a data center-specific energy standard.

As building and systems technology has changed over the years, ASHRAE has been able to respond by continuously refining its approach to defining energy efficiency. For more than 40 years ASHRAE has consistently developed a widely used process for determining compliance for commercial buildings. However, determining compliance for data centers proves to be more challenging, due to the exceptional nature of the facility’s electrical consumption and rigorous reliability requirements; this drove the creation of ASHRAE Standard 90.4-2016: Energy Standard for Data Centers.

Adding urgency to the development of a data center energy standard is the rapid proliferation of massive data centers, which compounds the logistical difficulty of identifying, developing, and releasing a new standard as complex as ASHRAE 90.1. Every new release of Standard 90.1 (since 2010) builds on the previous release, providing more instruction and metrics for developing energy-consumption compliance specific to data centers.

Tackling energy use: Looking ahead

As hyperscale data centers and cloud computing flourish, energy efficiency and operating costs for data centers continue to be a fundamental concern to owners and operators. For example, the Cisco Cloud Index provides analysis of cloud and data center platforms and predicts that, by 2020, traffic within hyperscale data centers will quintuple, representing 53% of all data center traffic.

Hyperscale data centers are designed to be highly scalable, homogeneous, and highly virtualized. These data centers also tend to have elevated computer equipment densities (measured in watts per square foot or kilowatts per server cabinet) and above-average overall power demands (measured in megawatts). This is not just true of new data centers. Existing data centers that retire end-of-life servers, storage, and networking equipment can often end up with a net increase in electrical load, requiring greater amounts of power than the computer systems did prior to the upgrade.

Part of this has to do with being able to fit more hardware in the place of the displaced equipment. So even if the individual server, as an example, has a smaller power demand than its predecessor, the total load will increase due to the increased number of servers.

The phrase "next-generation computing" may evoke a feeling of massively powerful, autonomous computers. That isn’t too far from reality, especially when talking about supercomputers and other high-performance computing systems. These computing platforms are set apart from other systems by the ability to solve extremely complex and data-intensive problems.

For larger systems, data-throughput speed and sheer computational muscle require a peak power demand unlike any commercial computing platform; it is not unusual to see server cabinets rated from 80 kW to well over 100 kW (compared to a corporate data center where the power demand per server cabinet will generally range from 5 kW to 20 kW). These leading-edge machines are purpose-built and use proprietary power distribution and cooling systems. And these machines will run at peak power for weeks or months when processing particularly intensive jobs. Due to the massive power demand and associated energy use, the supercomputing community has placed a high priority on efficiency as it applies to computer performance. For example, The Top500, a database of end user-supplied performance data, tracks the 500 most powerful commercially available computer systems. A subset of The Top500, the Green500, ranks the top 500 most efficient supercomputers in the world.

Understanding that supercomputers are built for specific uses, especially at the upper end of computational power, there are few similarities amongst the different machines. To address this, the Green500 list is created using the performance-per-watt efficiency metric; this uses the metric floating point operations per second (FLOPS) and the power measurement in watts, resulting in FLOPS per watt. This normalizes the different platforms and system sizes, allowing a valid comparison of supercomputing efficiency. While there are certainly other performance tests for supercomputers, the FLOPS-per-watt metric is particularly useful for building services and energy engineers.
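As a minimal sketch of the performance-per-watt idea described above, the snippet below divides sustained FLOPS by measured power for two systems of very different size. The function name and both sets of numbers are hypothetical and chosen only for illustration; they are not taken from the Green500 or any other published list.

```python
def flops_per_watt(flops: float, power_watts: float) -> float:
    """Return performance-per-watt efficiency in FLOPS per watt."""
    if power_watts <= 0:
        raise ValueError("power_watts must be positive")
    return flops / power_watts


# Hypothetical systems (illustrative values only, not published data).
system_a = flops_per_watt(flops=20e15, power_watts=2.0e6)  # 20 PFLOPS at 2 MW
system_b = flops_per_watt(flops=2e15, power_watts=0.1e6)   # 2 PFLOPS at 100 kW

print(f"System A: {system_a / 1e9:.1f} GFLOPS/W")
print(f"System B: {system_b / 1e9:.1f} GFLOPS/W")
```

In this made-up case the smaller machine delivers one-tenth of the performance but twice the efficiency, which is the kind of comparison across system sizes that the normalized metric makes possible.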

Early metric development

Over the years, a lot of effort and specialized knowledge has gone into the advancement of energy efficiency in data centers. The formation of some of the most influential organizations began close to 2 decades ago, subsequently building up considerable membership bases. Engineers, scientists, researchers, the U.S. federal government, manufacturers, professional organizations, and many others were the driving force behind establishing these organizations.

An interesting aspect of these associations is that the membership will typically have varied reasons for wanting to develop energy efficiency goals. The diverse mix of participants encouraged debate and discussion, which is a big reason why much of the material published was well-received and is still relevant many years later. Also, the organizations did not operate under the same rules: some were top-down (like the federal government entities) and some were bottom-up (like engineering and professional societies).

One of these organizations, The Green Grid (TGG), is at the forefront of promoting data center efficiency. TGG also has a diverse membership base, so the information generated by TGG consists of varied topics, applicable to different disciplines, but all still centered around data center efficiency.

TGG released the seminal white paper in 2007, "Green Grid Metrics: Describing Datacenter Power Efficiency." This paper formally introduced power usage effectiveness (PUE), described as a short-term metric to determine data center power efficiency, derived from facility power measurements:

PUE = (total facility power)/(IT equipment power)
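The ratio can be illustrated with a short calculation. This is only a sketch: the function name and the load breakdown (cooling, electrical losses, lighting) are hypothetical values chosen for the example, not measurements from a real facility.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """PUE = (total facility power) / (IT equipment power)."""
    if it_equipment_kw <= 0:
        raise ValueError("it_equipment_kw must be positive")
    return total_facility_kw / it_equipment_kw


# Hypothetical instantaneous loads in kW (illustrative values only).
it_kw = 1000.0
cooling_kw = 350.0
electrical_losses_kw = 100.0
lighting_and_misc_kw = 50.0

total_kw = it_kw + cooling_kw + electrical_losses_kw + lighting_and_misc_kw
value = pue(total_kw, it_kw)
print(f"PUE = {value:.2f}")  # PUE = 1.50
```

A value of 1.50 here means that every kilowatt delivered to the IT equipment is accompanied by another half kilowatt of cooling, power-delivery, and other facility load.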

At that time, TGG’s stated purpose in developing the paper was to "enable data center operators to quickly estimate the energy efficiency of their data centers, compare the results against other data centers, and determine if any energy efficiency improvements need to be made." (In subsequent white papers, TGG acknowledged that they no longer recommend comparing two data centers based on PUE results because there are many attributes of data center location, reliability level, engineering, implementation, and operations that affect PUE.)

Regardless of TGG’s caution on misapplying PUE by comparing different data centers, TGG also acknowledged that many organizations in the data center industry have already begun to compare PUEs among data centers, which raises further questions around how to interpret the results. Organizations are realizing that using PUE as an energy-efficiency yardstick has some drawbacks, exacerbated by the fact that there are various ways to calculate PUE and stakeholders in the industry have expressed concerns about the consistency and repeatability of reported PUE measurements.

Ironically, at the same time PUE was gaining global acceptance, the data center industry was becoming more sophisticated and began requiring not just a metric, but a more detailed, robust process that evaluates conformity to data center energy-use consensus standards.

Today, PUE is one of the most widely used metrics for determining efficiency in data centers. While extremely valuable for calculating and benchmarking efficiency in data centers, PUE was never meant to evaluate proposed data center designs against a standard, especially when different cooling systems, economizers, and climates are part of the evaluation. Even though it would be several years before the release of ASHRAE Standard 90.4, PUE, other related metrics, and informal design practices would continue to be used as unofficial proxies for the yet-to-come formal process used in the ASHRAE standard.

A new standard for defining energy-use compliance

From the early 2000s to the present, data center organizations, state governments, electrical utilities, and research institutions published myriad best practices, design guides, white papers, etc. on data center energy efficiency. Over this time period, the primary vision didn’t change drastically; it remained imperative that strategies for data center design and construction include a solid vision and plan on how to maximize energy efficiency and minimize negative impacts on our natural resources. Ensuring optimization of energy use (and overall reduction) for data centers can only come from strong advocacy and the continued development of comprehensive energy standards and codes.

Commercial buildings in the U.S. and abroad are designed using standards, guidelines, and codes that include requirements for energy efficiency. ASHRAE 90.1 is an indispensable and widely referenced standard for this, used for design, documentation, and compliance. It has proven to be very effective in reducing energy use in buildings dating back to 1976. But with the proliferation of data centers that consume unprecedented amounts of energy, it became clear that Standard 90.1 could not address certain aspects of data center design vis-à-vis protocols for system type, reliability, electrical system efficiency, etc.

For example, ASHRAE 90.1 contains a process on how to model a computer room, but this language is intended for a small data processing room within a larger corporate building, which is very different from a stand-alone data center with a small amount of administrative space.

Developing a new standard absolutely needed to be industry-backed, formed using a consensus-driven process, and exclusively for data centers. Given its widespread use and long history, the logical platform was the existing ASHRAE 90.1. Using ASHRAE 90.1 as a basis had another key advantage: municipalities can adopt and codify it for inclusion in their local building code.

The release of ASHRAE 90.4-2016

After several years of development, in 2015 ASHRAE released drafts of the 90.4 Energy Standard for Data Centers for public review and comment. The following year, after the review process concluded, ASHRAE released Standard 90.4-2016. Since ASHRAE 90.1 addresses overarching energy efficiency requirements that are applicable to all building types, ASHRAE designed Standard 90.4 to augment or replace sections in Standard 90.1.

ASHRAE 90.1-2016 is the normative reference to ASHRAE 90.4, creating a system that avoids doubling up on future revisions to the standard, minimizes any unintended redundancies, and ensures that the focus of ASHRAE 90.4 remains exclusive to data center facilities. Also, issuing updates to ASHRAE 90.1 will automatically update ASHRAE 90.4 for the referenced sections. Conversely, updates to ASHRAE 90.4 will not affect the language in ASHRAE 90.1.

Because many local jurisdictions operate on a 3-year cycle for updating their building codes, many are still using ASHRAE 90.1-2013 or earlier. The normative reference is ASHRAE 90.1-2016; however, the final say on an administrative matter like this will always fall to the authority having jurisdiction (AHJ).

Differences between 90.1 and 90.4

The biggest energy-related difference between a data center and a standard commercial building is that the annual electricity use attributable to information technology equipment (ITE) in a data center accounts for 75% to 90% of the total. Compare this to personal computers and office equipment in a commercial building, which account for 10% to 25%. (These numbers are based on typical design parameters for data centers and commercial office buildings and have been validated from operational measurements.)

When analyzing energy use in a data center in the design stage, the primary areas of opportunity are the power and cooling systems. Because the type and configuration of the ITE may be unknown, engineers will use the total electrical demand in kilowatts, assumed to be a fixed value, during the preliminary stages of a project. This is one of the situations where Standard 90.1 presents a challenge to engineers in demonstrating energy-reduction strategies. Because the ITE load is the largest percentage of the overall electrical demand and is the same in both the baseline and proposed designs, the improvement in energy efficiency is just a small fraction of the total when comparing the baseline design against the proposed energy efficiency options for the cooling and electrical systems.
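The dilution effect described above can be shown with simple arithmetic. The sketch below assumes a constant ITE load and constant average cooling and electrical overhead for the whole year; all of the kilowatt values and variable names are hypothetical and serve only to illustrate the point.

```python
HOURS_PER_YEAR = 8760


def annual_kwh(average_kw: float) -> float:
    """Annual energy for a load that averages `average_kw` all year."""
    return average_kw * HOURS_PER_YEAR


# Hypothetical average loads in kW (illustrative values only).
ite_kw = 1000.0               # fixed ITE load, identical in both designs
baseline_overhead_kw = 500.0  # cooling + electrical losses, baseline design
proposed_overhead_kw = 400.0  # cooling + electrical losses, proposed design

baseline_total = annual_kwh(ite_kw + baseline_overhead_kw)
proposed_total = annual_kwh(ite_kw + proposed_overhead_kw)

# Savings measured against the systems the engineer actually controls.
overhead_savings_pct = (
    100 * (baseline_overhead_kw - proposed_overhead_kw) / baseline_overhead_kw
)
# Savings measured against whole-facility energy, ITE included.
whole_facility_savings_pct = 100 * (baseline_total - proposed_total) / baseline_total

print(f"Savings in cooling/electrical overhead: {overhead_savings_pct:.1f}%")
print(f"Savings in whole-facility energy:       {whole_facility_savings_pct:.1f}%")
```

With these assumed numbers, a 20% improvement in the mechanical and electrical systems shows up as less than a 7% improvement in whole-facility energy, because the fixed ITE load dominates the denominator.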

Performance-based approach

ASHRAE 90.4 uses a performance-based approach, which allows the engineer to develop energy-reduction strategies using computer-based modeling rather than taking a prescriptive approach. Not only does this result in more accurate comparisons, but it also accommodates for next-generation energy-efficient cooling solutions (and for the rapid change in computer technology).

Some of the provisions seem to especially encourage innovative solutions:

Onsite renewables or recovered energy. The standard allows for a credit to the annual energy use if onsite renewable energy generation is used or waste heat is recovered for other uses. Data centers can be ideal candidates for recovering energy, as the load tends to be relatively constant throughout the year. Also, when water-cooled computers are used with high-discharge water temperatures, the water can be used for building heating, boiler-water preheating, snow melting, or other thermal uses when the facility reaches the designed load.

Derivation of mechanical-load component (MLC) values. The MLC values in the tables in ASHRAE 90.4 are generic to allow multiple systems to qualify for the path. The MLC values are based on equipment currently available in the marketplace from multiple manufacturers that has been certified as meeting the minimum efficiency requirements using the Air-Conditioning, Heating, and Refrigeration Institute (AHRI) Product Performance Certification Program. This is the benchmark to meet minimum compliance, but ideally, the project would go beyond the minimum and demonstrate even greater energy-reduction potential.

Design conditions. The annualized MLC values for air systems are based on a delta T (temperature rise of the supply air) of 20°F and a return-air temperature of 85°F. However, the proposed design is not bound to these values if the design temperatures are compatible with the performance characteristics of the coils, pumps, fan capacities, etc. This provision from the standard gives the engineer lots of room to innovate and propose nontraditional designs, such as water cooling of the ITE.

Trade-off method. Sometimes mechanical and electrical systems have constraints that may disqualify them from meeting the MLC and electrical-loss component (ELC) values on their own merit. The standard allows, for example, a less efficient mechanical system to be offset by a more efficient electrical system (and vice versa).
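The trade-off idea can be sketched as a simple check. This example assumes that the combined path compares the sum of the design MLC and ELC against the sum of the maximum allowed MLC and ELC; that reading should be confirmed against the text of the standard. The class name, function names, and all numeric values are hypothetical placeholders, not figures from the ASHRAE 90.4 tables.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoadComponents:
    mlc: float  # mechanical-load component
    elc: float  # electrical-loss component


def complies_individually(design: LoadComponents, maximum: LoadComponents) -> bool:
    """Each component must meet its own maximum."""
    return design.mlc <= maximum.mlc and design.elc <= maximum.elc


def complies_by_tradeoff(design: LoadComponents, maximum: LoadComponents) -> bool:
    """Assumed trade-off rule: compare combined values, not each one alone."""
    return design.mlc + design.elc <= maximum.mlc + maximum.elc


# Placeholder limits and design values (NOT taken from the standard).
maximum = LoadComponents(mlc=0.30, elc=0.15)
design = LoadComponents(mlc=0.33, elc=0.10)  # weaker mechanical, stronger electrical

print("Meets each limit separately:", complies_individually(design, maximum))
print("Meets combined limit:       ", complies_by_tradeoff(design, maximum))
```

In this made-up case the mechanical system misses its own limit, but the more efficient electrical system pulls the combined value under the combined limit.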

Key concepts of ASHRAE 90.4

Using ASHRAE 90.4 in combination with Standard 90.1 gives the engineer a completely new method for determining energy-consumption compliance for a data center facility. In Standard 90.4, ASHRAE introduces new terminology for demonstrating compliance: design and annual MLC and ELC. ASHRAE is careful to note that these values are not to be confused with PUE or partial PUE and are to be used only within the context of ASHRAE 90.4.

The standard includes compliance tables consisting of the maximum load components for each of the 19 ASHRAE climate zones. Assigning an energy efficiency target, either in the form of design or an annualized MLC to a specific climate zone, reinforces the inextricable link between climate and data center energy performance. Design strategies, such as elevated temperatures in the data center and air or water economization, are heavily dependent on the climate; when analyzing efficiency across the climate zones, the data will feed into the decision-making process to determine the location of the data center. While using the data to determine a location for the data center is not part of the process for determining compliance, it is valuable for the progress of the overall project.

Addressing reliability and energy efficiency

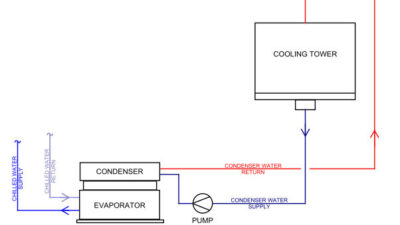

For data centers, one of the most distinctive requirements is designing systems with a very high degree of reliability. Simply speaking, one way to achieve this is by using redundant mechanical and electrical equipment. The redundant equipment will come online when a failure occurs or when performing maintenance so as not to compromise critical operations. Different engineers use different approaches based on their clients’ needs. Some will design in extra cooling units, pumps, chillers, etc., which run all the time, cycling units on and off as necessary.

Other designs might have equipment to handle more stringent design conditions, such as ASHRAE’s 0.4% climate data (dry-bulb temperatures corresponding to the 0.4% annual cumulative frequency of occurrence). And yet others will use variable-speed motors to vary water and airflow, delivering the required cooling based on a changing ITE load.

Because these design approaches are quite different from one another, Table 6.2.1.2.1.2 in ASHRAE 90.4 provides methods for calculating MLC compliance under these scenarios.

A major difference from previous versions of 90.1 is the inclusion of tables focusing on electrical-component redundancy and losses for the various elements in the power-delivery chain. These tables demystify the process and help identify areas of inefficiency in the electrical system. The addition of these tables, emphasizing the interplay between reliability and efficiency, exemplifies how ASHRAE is addressing the nuances that, while seemingly minor, have a big impact on energy use.

What about the computers?

Because ASHRAE 90.4 is an energy standard for the data center building, it does not provide specific guidance for improving the energy efficiency of the ITE systems. Because the ITE systems will consume 75% to 90% of the data center’s annual energy, looking for ways to increase the efficiency of ITE systems is a priority.

ITE manufacturers have made a marked improvement in reducing energy consumption while simultaneously improving performance. Advances in processor and memory technology have lowered servers’ energy use without sacrificing performance, as illustrated in benchmark data (Figures 1 and 2). This benchmark evaluates the power and performance characteristics of single servers and multi-node servers. However, the operation of the equipment will arguably have the largest impact on energy consumption. Certain operational strategies are very effective in reducing the quantity of necessary equipment while providing an increase in utilization efficiency (see below).

Energy-reducing operational strategies for information technology equipment (ITE)

- Virtualization

- Drive partitioning

- High-efficiency storage devices

- Proprietary application-consolidation algorithms

- High-efficiency chip sets

- Shared servers

Another way to attain energy savings in ITE comes from different methods of operating the servers. Compute loads running on one server can be shifted to a second server, sending the first server into a very low power state while the second server runs at a more efficient utilization level. This can be done at the local data center level, or across data centers in diverse geographic locations.
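A rough sketch of why shifting load between servers can save energy is shown below. It assumes a simple linear power model (idle power plus a share of the idle-to-peak range proportional to utilization), which is a common simplification rather than a property of any specific server. All wattages, utilization levels, and names are hypothetical.

```python
def server_power_w(utilization: float, idle_w: float, peak_w: float) -> float:
    """Assumed linear model: power rises linearly from idle_w to peak_w."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return idle_w + (peak_w - idle_w) * utilization


# Hypothetical server characteristics in watts (illustrative values only).
IDLE_W = 100.0
PEAK_W = 300.0
LOW_POWER_STATE_W = 15.0

# Before: two servers each running at 30% utilization.
before_w = 2 * server_power_w(0.30, IDLE_W, PEAK_W)

# After: the same work runs on one server at 60%; the other is parked.
after_w = server_power_w(0.60, IDLE_W, PEAK_W) + LOW_POWER_STATE_W

savings_w = before_w - after_w
print(f"Before consolidation: {before_w:.0f} W")
print(f"After consolidation:  {after_w:.0f} W")
print(f"Reduction:            {savings_w:.0f} W ({100 * savings_w / before_w:.0f}%)")
```

Under these assumptions the saving comes almost entirely from eliminating one server's idle power, which is why the strategy works best when many servers sit at low utilization.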

Using water to cool the ITE is another powerful strategy in reducing ITE energy. Because water is used to cool the internal components, fans in the servers will use less energy. Also, because the internal components have a much more uniform temperature, less energy is needed to power the components. Developing these energy-reduction strategies, and others like them, requires the collaboration of the mechanical and electrical building engineers and ITE teams, resulting in synergistic opportunities for further reducing data center energy use even beyond the requirements of ASHRAE 90.4.

For people working to improve energy efficiency in data centers, the release of ASHRAE 90.4-2016 was a watershed moment. In the United States, this is the first code-ready, technically robust standard that provides guidance on how to demonstrate that mechanical and electrical systems conform to the energy-efficiency requirements. This is no small feat, considering that data center mechanical/electrical systems can have a wide variety of design approaches and reliability requirements, especially as the ITE manufacturers continue to develop more efficient equipment and systems, some requiring novel means of power and cooling.

Because ASHRAE 90.4 is a separate document from ASHRAE 90.1, as computer technology changes, the process to augment/revise ASHRAE 90.4 should be straightforward in light of its singular focus on data centers. Looking ahead, ASHRAE’s ongoing public review and comment processes (as a part of the continuous-maintenance process) will ensure that ASHRAE 90.4 will continue to be the vanguard in energy-efficient design in data centers.

Bill Kosik is a data center energy efficiency strategist. In the mission critical industry, he is a subject matter expert in research, analysis, strategy, and planning in the reduction of data center energy consumption, water use, and indirect greenhouse gas emissions. Kosik is a member of the Consulting-Specifying Engineer editorial advisory board.