While AI computing drives significant energy use and carbon impact, its waste heat can be recovered through advanced liquid-cooling and district energy systems.

Crunching the data for artificial intelligence (AI) computations demands massive amounts of energy from high-performance computing facilities, which results in a hefty carbon footprint. Leveraging the waste heat these facilities produce, however, can lead to synergistic energy use and improved sustainability.

The potential begins with the cooling needs of AI computational equipment, for which standard air cooling has become ill-suited as rack power densities keep climbing. Taking its place are liquid cooling technologies such as immersion and direct-to-chip cooling. These high-performance cooling systems capture significant waste heat from the computing equipment. On certain campuses, this waste heat can be recovered and pushed into district energy systems to heat neighboring facilities, greatly supporting overall sustainability and greenhouse gas reduction goals.

Such heat recovery could take the form of direct heat supplied to the existing campus heating distribution, or it could be the catalyst for deploying a modern fifth-generation district energy system that uses heat pumps and distributed sources and sinks.
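
As a rough illustration of the two recovery paths, the sketch below estimates how much heat a liquid-cooled facility might make available, and how much heat a heat pump would deliver after lifting it to a usable temperature. The function names, the 2 MW load, the 75% capture fraction and the heating coefficient of performance (COP) of 4 are all hypothetical assumptions chosen for illustration, not figures from any actual project.

```python
def recoverable_heat_kw(it_load_kw: float, capture_fraction: float) -> float:
    """Heat captured by the liquid loop, assuming nearly all IT power becomes heat."""
    if not 0.0 <= capture_fraction <= 1.0:
        raise ValueError("capture_fraction must be between 0 and 1")
    return it_load_kw * capture_fraction


def heat_pump_output_kw(source_heat_kw: float, cop_heating: float) -> float:
    """Heat delivered by a heat pump that absorbs source_heat_kw.

    Energy balance: delivered = source + compressor work, and
    COP_heating = delivered / work, so delivered = source * COP / (COP - 1).
    """
    if cop_heating <= 1.0:
        raise ValueError("cop_heating must be greater than 1")
    return source_heat_kw * cop_heating / (cop_heating - 1.0)


if __name__ == "__main__":
    it_load_kw = 2000.0       # hypothetical 2 MW IT load
    capture_fraction = 0.75   # assumed share of heat reaching the liquid loop
    cop_heating = 4.0         # assumed heat pump heating COP

    captured = recoverable_heat_kw(it_load_kw, capture_fraction)
    delivered = heat_pump_output_kw(captured, cop_heating)
    print(f"Captured heat: {captured:.0f} kW")
    print(f"Delivered after heat pump lift: {delivered:.0f} kW")
```

In the direct-heat path only the captured figure applies; in the heat pump path the compressor's electrical input adds to the heat delivered, which is why the second number is larger.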

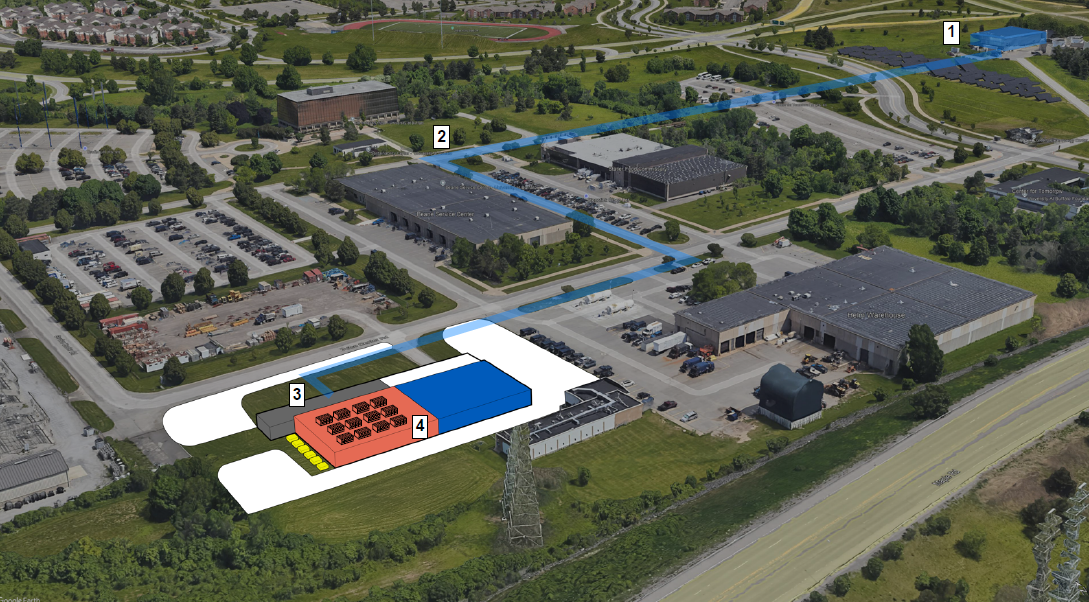

For example, the site plan shown here reflects phase one of a higher education campus energy reuse project. Excess energy from the high-performance computing facility (shown as No. 1) is delivered via buried chilled water infrastructure (No. 2) to the chilled water return (No. 3) within the central utility plant (No. 4). The energy is then distributed either as direct heat via the existing campus heating distribution or via a modern fifth-generation district system that uses heat pumps and distributed sources and sinks.
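
To give a sense of scale for the buried piping in such a scheme, the following sketch applies the standard sensible-heat relation Q = m × cp × ΔT to estimate the water flow needed to carry a given heat load. The 1,500 kW load and 6 K temperature rise are hypothetical values, not data from the project described, and the function name is illustrative only.

```python
WATER_CP_KJ_PER_KG_K = 4.186  # approximate specific heat of liquid water


def required_flow_kg_per_s(heat_kw: float, delta_t_k: float) -> float:
    """Mass flow of water needed to carry heat_kw with a temperature rise of delta_t_k.

    Rearranged from Q = m_dot * cp * delta_T, where 1 kW = 1 kJ/s.
    """
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_kw / (WATER_CP_KJ_PER_KG_K * delta_t_k)


if __name__ == "__main__":
    heat_kw = 1500.0   # hypothetical recovered heat load
    delta_t_k = 6.0    # assumed temperature rise across the loop
    flow = required_flow_kg_per_s(heat_kw, delta_t_k)
    # Water is close to 1 kg per liter, so kg/s is roughly L/s.
    print(f"Required flow: {flow:.1f} kg/s (roughly the same in L/s)")
```

A wider temperature difference lowers the required flow, which is one reason loop temperatures matter when sizing pipes and pumps.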

Such a strategy can also be applied to private sector data centers, private research and development centers, and healthcare and federal institution campuses that have a high-performance computing facility. Even institutions without high-performance data centers are considering upgrading to AI computing and leveraging the excess energy to aid their long-term sustainability initiatives.

Beyond investigating the potential for synergistic energy use, engineers advising clients on an AI computing facility should also:

- Confirm that utility electrical service capacity is or will be available to the building both for Day 1 and for future growth.

- Ensure that a large portion of the programmed space is committed to generators, chillers, uninterruptible power supplies, switchgear and similar equipment to support the high computing density and high overall capacity.

- Determine the number of redundant components and pathways needed within the data center.

- Specify high-density cooling, typically direct liquid cooling and rack-specific cooling such as rear-door coolers, to adequately cool rack densities that can exceed 100 kilowatts per rack.

- Fine-tune system selection to ensure that targets for power usage effectiveness (PUE), water usage effectiveness (WUE) and other sustainability metrics are achieved.

- Provide the facility with the flexibility to respond to the ever-changing landscape of the computing world, where AI is still in its infancy.
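
Several of the checks above reduce to simple arithmetic. The sketch below computes power usage effectiveness (PUE, total facility energy divided by IT equipment energy), water usage effectiveness (WUE, site water use in liters divided by IT equipment energy in kWh) and the number of cooling units needed under an N+1 redundancy scheme. All input values, unit sizes and function names are hypothetical, and real projects would rely on the owner's requirements and detailed load calculations.

```python
import math


def pue(total_facility_kwh: float, it_kwh: float) -> float:
    """Power usage effectiveness: total facility energy over IT equipment energy."""
    if it_kwh <= 0:
        raise ValueError("it_kwh must be positive")
    return total_facility_kwh / it_kwh


def wue(site_water_liters: float, it_kwh: float) -> float:
    """Water usage effectiveness in liters per kWh of IT equipment energy."""
    if it_kwh <= 0:
        raise ValueError("it_kwh must be positive")
    return site_water_liters / it_kwh


def units_with_redundancy(load_kw: float, unit_capacity_kw: float,
                          redundant_units: int = 1) -> int:
    """Units needed to carry load_kw (N) plus the chosen number of spares."""
    if unit_capacity_kw <= 0:
        raise ValueError("unit_capacity_kw must be positive")
    return math.ceil(load_kw / unit_capacity_kw) + redundant_units


if __name__ == "__main__":
    # Hypothetical annual figures for a small AI computing facility.
    print(f"PUE: {pue(13_000_000.0, 10_000_000.0):.2f}")
    print(f"WUE: {wue(2_000_000.0, 10_000_000.0):.2f} L/kWh")
    # Ten racks at the 100 kW/rack density mentioned above, 400 kW cooling units.
    print(f"Cooling units (N+1): {units_with_redundancy(10 * 100.0, 400.0)}")
```

A PUE closer to 1.0 means less energy is spent on cooling and power distribution overhead relative to the computing load itself.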

As AI computing becomes increasingly central to mission-critical operations, leveraging its thermal byproduct offers a rare opportunity to turn a traditionally wasted output into a valuable campus asset — supporting energy efficiency, resilience and long-term carbon reduction.